
Multistart optimization

Runs a simple optimization problem from multiple starting points.

An MDOScenario is nested in a DOEScenario using an MDOScenarioAdapter, so that each DOE sample provides the starting point of one optimization.

from __future__ import absolute_import, division, print_function, unicode_literals

from future import standard_library

from gemseo.api import (
    configure_logger,
    create_design_space,
    create_discipline,
    create_scenario,
)
from gemseo.core.mdo_scenario import MDOScenarioAdapter

configure_logger()

standard_library.install_aliases()

Create the disciplines

objective = create_discipline(
    "AnalyticDiscipline", expressions_dict={"obj": "x**3-x+1"}
)

constraint = create_discipline(
    "AnalyticDiscipline", expressions_dict={"cstr": "x**2+obj**2-1.5"}
)
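The two analytic disciplines are chained: `cstr` depends on `obj`, so the objective discipline has to be evaluated first. A minimal pure-Python sketch of that evaluation order, assuming plain floats instead of GEMSEO's array-valued inputs and outputs (the function names are hypothetical):

```python
# Pure-Python stand-ins for the two AnalyticDiscipline objects.
# Assumption: plain functions reproduce only the formulas, not GEMSEO's
# discipline API (which exchanges dictionaries of NumPy arrays).


def objective_discipline(x: float) -> dict:
    """obj = x**3 - x + 1"""
    return {"obj": x**3 - x + 1}


def constraint_discipline(x: float, obj: float) -> dict:
    """cstr = x**2 + obj**2 - 1.5 (satisfied when cstr <= 0)"""
    return {"cstr": x**2 + obj**2 - 1.5}


def evaluate(x: float) -> dict:
    # The constraint consumes the objective's output, so run the objective first.
    outputs = objective_discipline(x)
    outputs.update(constraint_discipline(x, outputs["obj"]))
    return outputs


print(evaluate(1.5))
```

At the initial value x = 1.5 this gives obj = 2.875 and cstr = 9.015625, so the starting point violates the constraint.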

Create the design space

design_space = create_design_space()
design_space.add_variable("x", 1, l_b=-1.5, u_b=1.5, value=1.5)
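The design space declares a single scalar variable `x` bounded by [-1.5, 1.5] and starting on its upper bound. A small sketch of such a bounded variable, assuming scalar values only; `BoundedVariable` and `project` are hypothetical names, not GEMSEO's `DesignSpace` API:

```python
from dataclasses import dataclass


@dataclass
class BoundedVariable:
    """Hypothetical stand-in for one design-space entry (scalar values only)."""

    name: str
    size: int  # kept for parity with add_variable; this sketch assumes size == 1
    lower: float
    upper: float
    value: float

    def __post_init__(self) -> None:
        # Reject inconsistent bounds or a starting value outside them.
        if self.lower > self.upper:
            raise ValueError(f"{self.name}: lower bound exceeds upper bound")
        if not self.lower <= self.value <= self.upper:
            raise ValueError(f"{self.name}: initial value outside the bounds")

    def project(self, candidate: float) -> float:
        """Clip a candidate point back into [lower, upper]."""
        return min(max(candidate, self.lower), self.upper)


# Mirrors add_variable("x", 1, l_b=-1.5, u_b=1.5, value=1.5).
x = BoundedVariable("x", 1, lower=-1.5, upper=1.5, value=1.5)
```

Clipping is one simple way for an optimizer to stay inside the bounds; the optimizers used by GEMSEO handle bounds in their own ways.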

Create the MDO scenario

scenario = create_scenario(
    [objective, constraint],
    formulation="DisciplinaryOpt",
    objective_name="obj",
    design_space=design_space,
)
# Inner optimizer: SLSQP, limited to 10 iterations.
scenario.default_inputs = {"algo": "SLSQP", "max_iter": 10}
# Inequality constraint, satisfied when cstr <= 0.
scenario.add_constraint("cstr", "ineq")
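The inner scenario minimizes `obj` subject to `cstr <= 0` with SLSQP, capped at 10 iterations. For intuition only, here is a standard-library sketch of a local solver for the same problem. It swaps SLSQP for a compass (pattern) search on a quadratic penalty; the function names, the penalty weight `mu` and the step sizes are assumptions:

```python
# Local solver sketch: compass search on a quadratic penalty.
# This is NOT SLSQP; it is a derivative-free stand-in using only the stdlib.

LOWER, UPPER = -1.5, 1.5


def obj(x: float) -> float:
    return x**3 - x + 1


def cstr(x: float) -> float:
    return x**2 + obj(x) ** 2 - 1.5


def penalized(x: float, mu: float = 100.0) -> float:
    # Only a violated constraint (cstr > 0) adds to the objective.
    return obj(x) + mu * max(0.0, cstr(x)) ** 2


def local_solve(x0: float, step: float = 0.25, tol: float = 1e-6, max_iter: int = 200) -> float:
    x = min(max(x0, LOWER), UPPER)
    for _ in range(max_iter):
        if step < tol:
            break
        # Try one step in each direction, clipped to the bounds.
        candidates = [min(max(x + d, LOWER), UPPER) for d in (step, -step)]
        candidate = min(candidates, key=penalized)
        if penalized(candidate) < penalized(x):
            x = candidate  # accept the improving move
        else:
            step /= 2  # no improvement: refine the step
    return x


x_opt = local_solve(1.5)  # start from the design space's initial value
print(x_opt, obj(x_opt), cstr(x_opt))
```

From x0 = 1.5 the search ends near x = 1/√3 ≈ 0.577, where obj = 1 - 2/(3√3) ≈ 0.615 and the constraint is inactive. SLSQP instead uses gradients and treats the constraint directly rather than through a penalty.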

Create the scenario adapter

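# The design variables (here only "x") are the adapter's inputs; with
# set_x0_before_opt=True their values are used as the starting point of the
# inner optimization. The adapter outputs "obj" and "cstr".
dv_names = scenario.formulation.opt_problem.design_space.variables_names
adapter = MDOScenarioAdapter(
    scenario, dv_names, ["obj", "cstr"], set_x0_before_opt=True
)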
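The adapter turns the whole inner scenario into a discipline: its inputs are the design variables and its outputs are `obj` and `cstr` after the inner optimization. A hypothetical sketch of that contract, assuming the outputs are read at the inner optimum and modelling only the `set_x0_before_opt=True` case; `solve` and `evaluate` are injected callables, not GEMSEO functions:

```python
from typing import Callable, Dict, List


class ScenarioAdapterSketch:
    """Hypothetical stand-in for an adapter wrapping an inner optimization.

    Only set_x0_before_opt=True is modelled: the incoming design value
    is used as the starting point of the inner optimization.
    """

    def __init__(
        self,
        solve: Callable[[float], float],
        evaluate: Callable[[float], Dict[str, float]],
        output_names: List[str],
    ) -> None:
        self.solve = solve  # maps a starting point x0 to a local optimum
        self.evaluate = evaluate  # maps a design point to all output values
        self.output_names = output_names

    def execute(self, x: float) -> Dict[str, float]:
        x_opt = self.solve(x)  # the input value becomes the starting point
        values = self.evaluate(x_opt)
        # Expose only the requested outputs, read at the inner optimum.
        return {name: values[name] for name in self.output_names}
```

For example, `ScenarioAdapterSketch(lambda x0: x0 / 2, lambda x: {"obj": x, "cstr": -x}, ["obj", "cstr"]).execute(1.0)` returns `{'obj': 0.5, 'cstr': -0.5}`. Injecting the solver keeps the wrapper independent of how the inner problem is solved.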

Create the DOE scenario

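# The DOE scenario samples x and executes the adapter, hence the inner
# optimization, once per sample.
scenario_doe = create_scenario(
    adapter,
    formulation="DisciplinaryOpt",
    objective_name="obj",
    design_space=design_space,
    scenario_type="DOE",
)
scenario_doe.add_constraint("cstr", "ineq")
# 10 full-factorial samples of x, i.e. 10 starting points.
run_inputs = {"n_samples": 10, "algo": "fullfact"}
scenario_doe.execute(run_inputs)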

Out:

{'eval_jac': False, 'algo': 'fullfact', 'n_samples': 10}
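The DOE scenario calls the adapter once per sample, so each of the 10 values of x is the starting point of one inner optimization. A self-contained sketch of that loop, assuming the full-factorial design spreads the 10 samples evenly over [-1.5, 1.5] with both bounds included, and using a compass search on a quadratic penalty as a stand-in for SLSQP (all names are hypothetical):

```python
# Multistart sketch: one local optimization per evenly spaced starting point.
# Assumption: compass search + quadratic penalty replaces SLSQP.

LOWER, UPPER, N_SAMPLES = -1.5, 1.5, 10


def obj(x):
    return x**3 - x + 1


def cstr(x):
    return x**2 + obj(x) ** 2 - 1.5


def penalized(x, mu=100.0):
    return obj(x) + mu * max(0.0, cstr(x)) ** 2


def local_solve(x0, step=0.25, tol=1e-6, max_iter=200):
    x = x0
    for _ in range(max_iter):
        if step < tol:
            break
        candidates = [min(max(x + d, LOWER), UPPER) for d in (step, -step)]
        candidate = min(candidates, key=penalized)
        if penalized(candidate) < penalized(x):
            x = candidate
        else:
            step /= 2
    return x


# Evenly spaced starting points, bounds included.
starts = [LOWER + i * (UPPER - LOWER) / (N_SAMPLES - 1) for i in range(N_SAMPLES)]

# One inner optimization per sample: (start, optimum, obj, cstr).
results = []
for x0 in starts:
    x_opt = local_solve(x0)
    results.append((x0, x_opt, obj(x_opt), cstr(x_opt)))

# Keep the best optimum among those satisfying cstr <= 0 (small tolerance).
feasible = [r for r in results if r[3] <= 1e-6]
best = min(feasible, key=lambda r: r[2])

for x0, x_opt, f, g in results:
    print(f"x0={x0:+.3f} -> x*={x_opt:+.4f}  obj={f:.4f}  cstr={g:+.4f}")
```

In this sketch some starts on the left of the interval (for instance x0 = -1.5) end in a region where the constraint cannot be satisfied, which is why the best result is chosen only among points with cstr <= 0.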

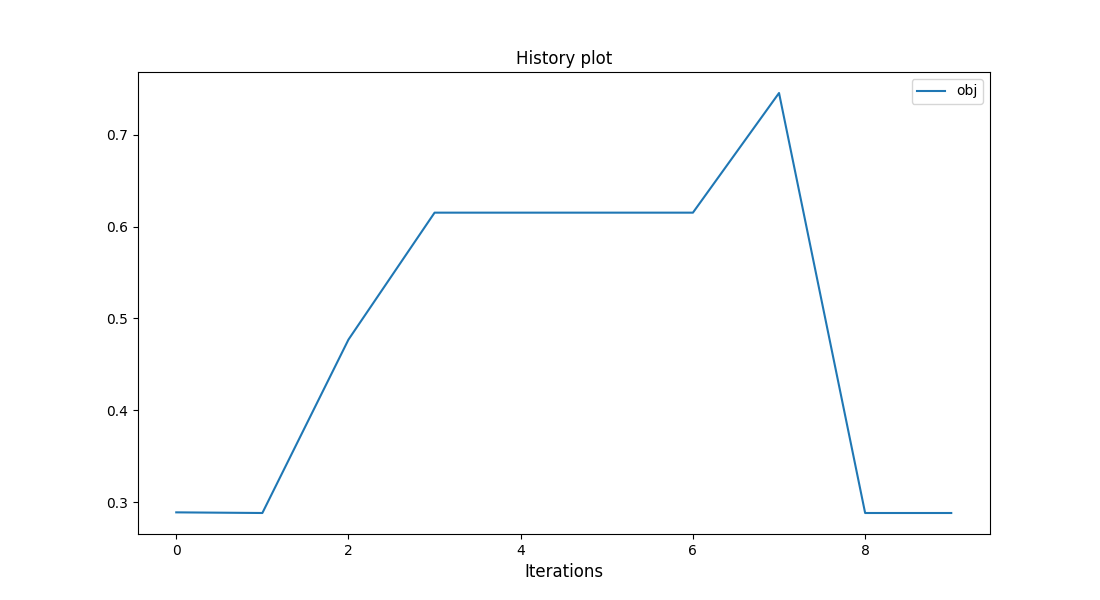

Plot the optimum objective for different x0

scenario_doe.post_process("BasicHistory", data_list=["obj"], save=False, show=True)

Out:

<gemseo.post.basic_history.BasicHistory object at 0x7fc29d9e7760>

Total running time of the script: ( 0 minutes 0.611 seconds)