Calibration of a polynomial regression

import matplotlib.pyplot as plt
from gemseo.algos.design_space import DesignSpace
from gemseo.api import configure_logger
from gemseo.mlearning.core.calibration import MLAlgoCalibration
from gemseo.mlearning.qual_measure.mse_measure import MSEMeasure
from gemseo.problems.dataset.rosenbrock import RosenbrockDataset
from matplotlib.tri import Triangulation

Load the dataset

dataset = RosenbrockDataset(opt_naming=False, n_samples=25)

Define the measure

configure_logger()
# The quality measure is evaluated on a separate test dataset.
test_dataset = RosenbrockDataset(opt_naming=False)
measure_options = {"method": "test", "test_data": test_dataset}

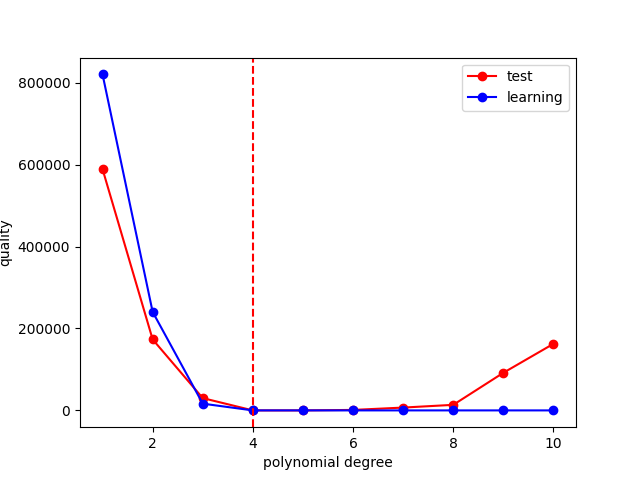
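The `"test"` method in `measure_options` asks for the quality measure to be evaluated on data held out from the learning set. As a minimal sketch of that idea, and not of GEMSEO's implementation, the snippet below computes a mean squared error in plain Python. The function name `mean_squared_error` and the toy values are hypothetical.

```python
def mean_squared_error(predictions, targets):
    """Return the mean of the squared differences between two sequences."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared_errors) / len(squared_errors)


# Hypothetical values: outputs of a fitted model on held-out test inputs,
# compared with the reference outputs stored in the test dataset.
test_targets = [1.0, 2.0, 5.0]
test_predictions = [1.0, 2.0, 3.0]

test_mse = mean_squared_error(test_predictions, test_targets)
print("test MSE:", test_mse)
```

A criterion of this kind is what the calibration minimizes with respect to the hyperparameters, while the learning error is computed on the data used to fit the model.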

Calibrate the degree of the polynomial regression

Define and execute the calibration

calibration_space = DesignSpace()
# One integer variable "degree" in [1, 10], starting at 1.
calibration_space.add_variable("degree", 1, "integer", 1, 10, 1)
calibration = MLAlgoCalibration(
    "PolynomialRegressor",
    dataset,
    ["degree"],
    calibration_space,
    MSEMeasure,
    measure_options,
)
# Evaluate the criterion on a full-factorial DOE of 10 samples.
calibration.execute({"algo": "fullfact", "n_samples": 10})
x_opt = calibration.optimal_parameters
f_opt = calibration.optimal_criterion
print("optimal degree:", x_opt["degree"][0])
print("optimal criterion:", f_opt)

Out:

INFO - 07:18:28:

INFO - 07:18:28: *** Start DOEScenario execution ***

INFO - 07:18:28: DOEScenario

INFO - 07:18:28: Disciplines: MLAlgoAssessor

INFO - 07:18:28: MDO formulation: DisciplinaryOpt

INFO - 07:18:28: Optimization problem:

INFO - 07:18:28: minimize criterion(degree)

INFO - 07:18:28: with respect to degree

INFO - 07:18:28: over the design space:

INFO - 07:18:28: +--------+-------------+-------+-------------+---------+

INFO - 07:18:28: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:28: +--------+-------------+-------+-------------+---------+

INFO - 07:18:28: | degree | 1 | 1 | 10 | integer |

INFO - 07:18:28: +--------+-------------+-------+-------------+---------+

INFO - 07:18:28: Solving optimization problem with algorithm fullfact:

INFO - 07:18:28: Full factorial design required. Number of samples along each direction for a design vector of size 1 with 10 samples: 10

INFO - 07:18:28: Final number of samples for DOE = 10 vs 10 requested

INFO - 07:18:28: ... 0%| | 0/10 [00:00<?, ?it]

INFO - 07:18:28: ... 100%|██████████| 10/10 [00:00<00:00, 292.20 it/sec, obj=1.63e+5]

INFO - 07:18:28: Optimization result:

INFO - 07:18:28: Optimizer info:

INFO - 07:18:28: Status: None

INFO - 07:18:28: Message: None

INFO - 07:18:28: Number of calls to the objective function by the optimizer: 10

INFO - 07:18:28: Solution:

INFO - 07:18:28: Objective: 1.0957626812742524e-24

INFO - 07:18:28: Design space:

INFO - 07:18:28: +--------+-------------+-------+-------------+---------+

INFO - 07:18:28: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:28: +--------+-------------+-------+-------------+---------+

INFO - 07:18:28: | degree | 1 | 4 | 10 | integer |

INFO - 07:18:28: +--------+-------------+-------+-------------+---------+

INFO - 07:18:28: *** End DOEScenario execution (time: 0:00:00.042904) ***

optimal degree: 4

optimal criterion: 1.0957626812742524e-24

Get the history

print(calibration.dataset.export_to_dataframe())

Out:

        outputs  inputs       outputs
      criterion  degree      learning
              0       0             0
0  5.888317e+05     1.0  8.200828e+05
1  1.732475e+05     2.0  2.404571e+05
2  3.001292e+04     3.0  1.645714e+04
3  1.095763e-24     4.0  1.703801e-24
4  1.097877e-01     5.0  1.391092e-23
5  1.183264e+03     6.0  2.332471e-24
6  6.895919e+03     7.0  1.401963e-23
7  1.356307e+04     8.0  5.192192e-23
8  9.180547e+04     9.0  8.964290e-23
9  1.625259e+05    10.0  8.767875e-23
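A test error near machine precision at degree 4 is what one would expect if the data come from a quartic polynomial. Assuming `RosenbrockDataset` samples the standard Rosenbrock function f(x, y) = (1 - x)^2 + 100 (y - x^2)^2 (an assumption, since this page does not state it), the sketch below checks numerically that the function is a sum of monomials of total degree at most 4.

```python
def rosenbrock(x, y):
    """Standard Rosenbrock function (assumed to underlie the dataset)."""
    return (1 - x) ** 2 + 100 * (y - x**2) ** 2


def rosenbrock_expanded(x, y):
    """Same function written as a sum of monomials of total degree <= 4."""
    return 1 - 2 * x + x**2 + 100 * y**2 - 200 * x**2 * y + 100 * x**4


# Compare both forms on a small grid over [-2, 2] x [-2, 2].
points = [(-2 + 0.5 * i, -2 + 0.5 * j) for i in range(9) for j in range(9)]
max_gap = max(abs(rosenbrock(x, y) - rosenbrock_expanded(x, y)) for x, y in points)
print("largest difference between the two forms:", max_gap)
```

Under that assumption, a degree-4 polynomial regressor can represent the target exactly, whereas lower degrees underfit and higher degrees have spare terms that 25 learning samples do not constrain.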

Visualize the results

degree = calibration.get_history("degree")
criterion = calibration.get_history("criterion")
learning = calibration.get_history("learning")
plt.plot(degree, criterion, "-o", label="test", color="red")
plt.plot(degree, learning, "-o", label="learning", color="blue")
plt.xlabel("polynomial degree")
plt.ylabel("quality")
# Mark the optimal degree with a dashed vertical line.
plt.axvline(x_opt["degree"][0], color="red", ls="--")
plt.legend()
plt.show()

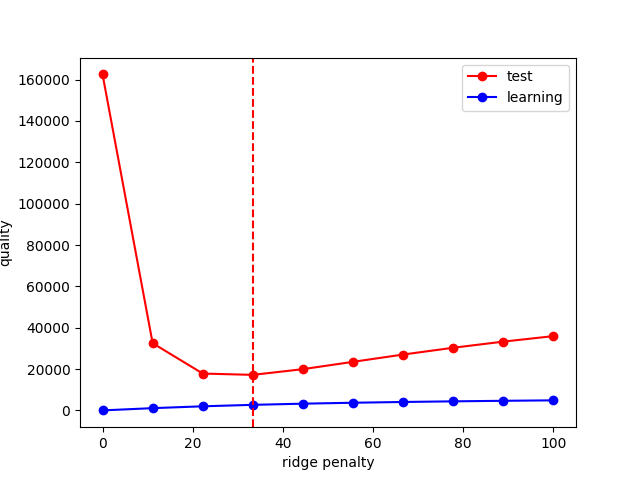
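The same reading of the curves can be done programmatically. The sketch below copies the test and learning errors from the history printed in this section and flags the degrees whose learning error is negligible while the test error is not. The threshold `NEGLIGIBLE` is an arbitrary illustrative choice.

```python
# Values copied from the calibration history of the degree.
degrees = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
test_errors = [
    5.888317e05, 1.732475e05, 3.001292e04, 1.095763e-24, 1.097877e-01,
    1.183264e03, 6.895919e03, 1.356307e04, 9.180547e04, 1.625259e05,
]
learning_errors = [
    8.200828e05, 2.404571e05, 1.645714e04, 1.703801e-24, 1.391092e-23,
    2.332471e-24, 1.401963e-23, 5.192192e-23, 8.964290e-23, 8.767875e-23,
]

# Arbitrary threshold, chosen only for illustration.
NEGLIGIBLE = 1e-12
overfitted = [
    d
    for d, test, learn in zip(degrees, test_errors, learning_errors)
    if learn < NEGLIGIBLE and test > NEGLIGIBLE
]
best_degree = min(zip(test_errors, degrees))[1]

print("degrees that fit the learning data but not the test data:", overfitted)
print("degree with the smallest test error:", best_degree)
```

Degrees 5 to 10 interpolate the learning samples but generalize increasingly poorly, which is why the calibration relies on the test error rather than the learning error.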

Calibrate the ridge penalty of the polynomial regression

Define and execute the calibration

calibration_space = DesignSpace()
# One float variable "penalty_level" in [0, 100], starting at 0.
calibration_space.add_variable("penalty_level", 1, "float", 0.0, 100.0, 0.0)
calibration = MLAlgoCalibration(
    "PolynomialRegressor",
    dataset,
    ["penalty_level"],
    calibration_space,
    MSEMeasure,
    measure_options,
    degree=10,
)
calibration.execute({"algo": "fullfact", "n_samples": 10})
x_opt = calibration.optimal_parameters
f_opt = calibration.optimal_criterion
print("optimal penalty_level:", x_opt["penalty_level"][0])
print("optimal criterion:", f_opt)

Out:

INFO - 07:18:28:

INFO - 07:18:28: *** Start DOEScenario execution ***

INFO - 07:18:28: DOEScenario

INFO - 07:18:28: Disciplines: MLAlgoAssessor

INFO - 07:18:28: MDO formulation: DisciplinaryOpt

INFO - 07:18:28: Optimization problem:

INFO - 07:18:28: minimize criterion(penalty_level)

INFO - 07:18:28: with respect to penalty_level

INFO - 07:18:28: over the design space:

INFO - 07:18:28: +---------------+-------------+-------+-------------+-------+

INFO - 07:18:28: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:28: +---------------+-------------+-------+-------------+-------+

INFO - 07:18:28: | penalty_level | 0 | 0 | 100 | float |

INFO - 07:18:28: +---------------+-------------+-------+-------------+-------+

INFO - 07:18:28: Solving optimization problem with algorithm fullfact:

INFO - 07:18:28: Full factorial design required. Number of samples along each direction for a design vector of size 1 with 10 samples: 10

INFO - 07:18:28: Final number of samples for DOE = 10 vs 10 requested

INFO - 07:18:28: ... 0%| | 0/10 [00:00<?, ?it]

INFO - 07:18:29: ... 100%|██████████| 10/10 [00:00<00:00, 312.12 it/sec, obj=3.59e+4]

INFO - 07:18:29: Optimization result:

INFO - 07:18:29: Optimizer info:

INFO - 07:18:29: Status: None

INFO - 07:18:29: Message: None

INFO - 07:18:29: Number of calls to the objective function by the optimizer: 10

INFO - 07:18:29: Solution:

INFO - 07:18:29: Objective: 17189.52649297074

INFO - 07:18:29: Design space:

INFO - 07:18:29: +---------------+-------------+-------------------+-------------+-------+

INFO - 07:18:29: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:29: +---------------+-------------+-------------------+-------------+-------+

INFO - 07:18:29: | penalty_level | 0 | 33.33333333333333 | 100 | float |

INFO - 07:18:29: +---------------+-------------+-------------------+-------------+-------+

INFO - 07:18:29: *** End DOEScenario execution (time: 0:00:00.040575) ***

optimal penalty_level: 33.33333333333333

optimal criterion: 17189.52649297074

Get the history

print(calibration.dataset.export_to_dataframe())

Out:

         outputs                       inputs
       criterion      learning  penalty_level
               0             0              0
0  162525.860760  8.767875e-23       0.000000
1   32506.221289  1.087801e+03      11.111111
2   17820.599507  1.982580e+03      22.222222
3   17189.526493  2.690007e+03      33.333333
4   19953.420378  3.251453e+03      44.444444
5   23493.269988  3.703714e+03      55.555556
6   27024.053276  4.074147e+03      66.666667
7   30303.486633  4.382362e+03      77.777778
8   33272.062306  4.642448e+03      88.888889
9   35934.745536  4.864667e+03     100.000000
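The ten penalty levels in this history are evenly spaced between the bounds of the calibration space. The sketch below reproduces them, assuming the full-factorial DOE places its levels uniformly and includes both bounds, which is consistent with the printed values. The helper `uniform_levels` is hypothetical and not part of GEMSEO.

```python
def uniform_levels(lower, upper, n_levels):
    """Return n_levels evenly spaced values from lower to upper, bounds included."""
    if n_levels < 2:
        raise ValueError("at least two levels are needed to include both bounds")
    step_count = n_levels - 1
    return [lower + i * (upper - lower) / step_count for i in range(n_levels)]


penalty_levels = uniform_levels(0.0, 100.0, 10)
for level in penalty_levels:
    print(f"{level:.6f}")
```

With a grid this coarse, the reported optimum of about 33.33 is only the best of ten candidates; a finer DOE or a narrower interval around it could refine the value.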

Visualize the results

penalty_level = calibration.get_history("penalty_level")
criterion = calibration.get_history("criterion")
learning = calibration.get_history("learning")
plt.plot(penalty_level, criterion, "-o", label="test", color="red")
plt.plot(penalty_level, learning, "-o", label="learning", color="blue")
# Mark the optimal penalty level with a dashed vertical line.
plt.axvline(x_opt["penalty_level"][0], color="red", ls="--")
plt.xlabel("ridge penalty")
plt.ylabel("quality")
plt.legend()
plt.show()

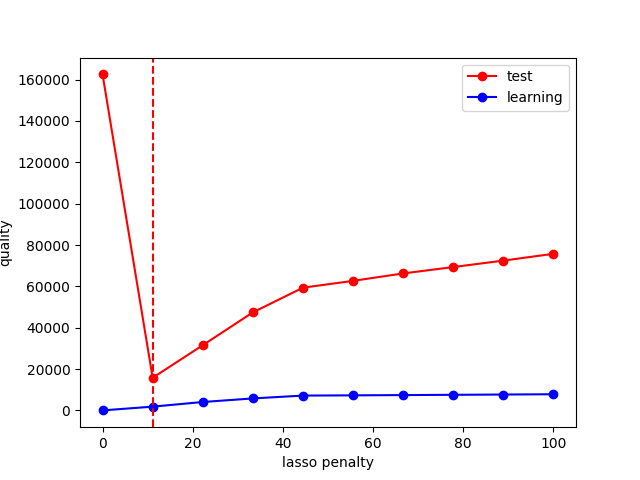

Calibrate the lasso penalty of the polynomial regression

Define and execute the calibration

calibration_space = DesignSpace()
# One float variable "penalty_level" in [0, 100], starting at 0.
calibration_space.add_variable("penalty_level", 1, "float", 0.0, 100.0, 0.0)
calibration = MLAlgoCalibration(
    "PolynomialRegressor",
    dataset,
    ["penalty_level"],
    calibration_space,
    MSEMeasure,
    measure_options,
    degree=10,
    # A zero L2 ratio selects the lasso penalty.
    l2_penalty_ratio=0.0,
)
calibration.execute({"algo": "fullfact", "n_samples": 10})
x_opt = calibration.optimal_parameters
f_opt = calibration.optimal_criterion
print("optimal penalty_level:", x_opt["penalty_level"][0])
print("optimal criterion:", f_opt)

Out:

INFO - 07:18:29:

INFO - 07:18:29: *** Start DOEScenario execution ***

INFO - 07:18:29: DOEScenario

INFO - 07:18:29: Disciplines: MLAlgoAssessor

INFO - 07:18:29: MDO formulation: DisciplinaryOpt

INFO - 07:18:29: Optimization problem:

INFO - 07:18:29: minimize criterion(penalty_level)

INFO - 07:18:29: with respect to penalty_level

INFO - 07:18:29: over the design space:

INFO - 07:18:29: +---------------+-------------+-------+-------------+-------+

INFO - 07:18:29: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:29: +---------------+-------------+-------+-------------+-------+

INFO - 07:18:29: | penalty_level | 0 | 0 | 100 | float |

INFO - 07:18:29: +---------------+-------------+-------+-------------+-------+

INFO - 07:18:29: Solving optimization problem with algorithm fullfact:

INFO - 07:18:29: Full factorial design required. Number of samples along each direction for a design vector of size 1 with 10 samples: 10

INFO - 07:18:29: Final number of samples for DOE = 10 vs 10 requested

INFO - 07:18:29: ... 0%| | 0/10 [00:00<?, ?it]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.301e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.631e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.284e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.984e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.054e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.152e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.925e+03, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.746e+03, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.372e+03, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:29: ... 100%|██████████| 10/10 [00:00<00:00, 157.01 it/sec, obj=7.57e+4]

INFO - 07:18:29: Optimization result:

INFO - 07:18:29: Optimizer info:

INFO - 07:18:29: Status: None

INFO - 07:18:29: Message: None

INFO - 07:18:29: Number of calls to the objective function by the optimizer: 10

INFO - 07:18:29: Solution:

INFO - 07:18:29: Objective: 15775.989581125898

INFO - 07:18:29: Design space:

INFO - 07:18:29: +---------------+-------------+-------------------+-------------+-------+

INFO - 07:18:29: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:29: +---------------+-------------+-------------------+-------------+-------+

INFO - 07:18:29: | penalty_level | 0 | 11.11111111111111 | 100 | float |

INFO - 07:18:29: +---------------+-------------+-------------------+-------------+-------+

INFO - 07:18:29: *** End DOEScenario execution (time: 0:00:00.072221) ***

optimal penalty_level: 11.11111111111111

optimal criterion: 15775.989581125898

Get the history

print(calibration.dataset.export_to_dataframe())

Out:

         outputs                       inputs
       criterion      learning  penalty_level
               0             0              0
0  162525.860760  8.767875e-23       0.000000
1   15775.989581  1.814382e+03      11.111111
2   31529.584354  4.057302e+03      22.222222
3   47420.249503  5.792299e+03      33.333333
4   59358.207437  7.169565e+03      44.444444
5   62656.171431  7.278397e+03      55.555556
6   66256.259889  7.410137e+03      66.666667
7   69336.190346  7.540731e+03      77.777778
8   72457.378777  7.675963e+03      88.888889
9   75749.793494  7.816545e+03     100.000000
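With both histories available, the ridge and lasso calibrations can be compared on the same grid. The sketch below copies the test criteria from the two histories printed on this page and reports the best penalty level for each. The helper `best` is hypothetical.

```python
# Penalty levels shared by both calibrations (from the printed histories).
penalty_levels = [
    0.0, 11.111111, 22.222222, 33.333333, 44.444444,
    55.555556, 66.666667, 77.777778, 88.888889, 100.0,
]
# Test criterion (MSE on the test dataset) copied from each history.
ridge_criterion = [
    162525.860760, 32506.221289, 17820.599507, 17189.526493, 19953.420378,
    23493.269988, 27024.053276, 30303.486633, 33272.062306, 35934.745536,
]
lasso_criterion = [
    162525.860760, 15775.989581, 31529.584354, 47420.249503, 59358.207437,
    62656.171431, 66256.259889, 69336.190346, 72457.378777, 75749.793494,
]


def best(criterion):
    """Return (penalty_level, criterion) at the smallest criterion value."""
    value, level = min(zip(criterion, penalty_levels))
    return level, value


ridge_best = best(ridge_criterion)
lasso_best = best(lasso_criterion)
print("ridge:", ridge_best)
print("lasso:", lasso_best)
```

On this grid the lasso reaches a somewhat lower test error than the ridge (about 1.58e4 against 1.72e4), and at a smaller penalty level. Several lasso fits emitted convergence warnings, so the lasso values should be read with some caution.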

Visualize the results

penalty_level = calibration.get_history("penalty_level")
criterion = calibration.get_history("criterion")
learning = calibration.get_history("learning")
plt.plot(penalty_level, criterion, "-o", label="test", color="red")
plt.plot(penalty_level, learning, "-o", label="learning", color="blue")
# Mark the optimal penalty level with a dashed vertical line.
plt.axvline(x_opt["penalty_level"][0], color="red", ls="--")
plt.xlabel("lasso penalty")
plt.ylabel("quality")
plt.legend()
plt.show()

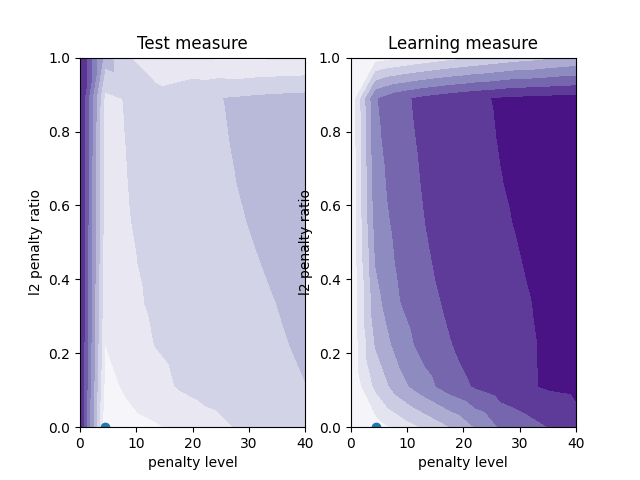
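The penalized calibrations on this page differ only in how `penalty_level` and `l2_penalty_ratio` are combined. The sketch below assumes the common elastic-net convention, in which the penalty is `penalty_level * (l2_penalty_ratio * ||w||_2^2 + (1 - l2_penalty_ratio) * ||w||_1)`. The exact scaling used by the library may differ, and the function `elastic_net_penalty` and the coefficient values are hypothetical.

```python
def elastic_net_penalty(weights, penalty_level, l2_penalty_ratio):
    """Penalty term under the assumed elastic-net convention."""
    l2_term = sum(w**2 for w in weights)
    l1_term = sum(abs(w) for w in weights)
    return penalty_level * (
        l2_penalty_ratio * l2_term + (1.0 - l2_penalty_ratio) * l1_term
    )


weights = [3.0, -4.0]  # hypothetical regression coefficients

# A ratio of 1 keeps only the L2 (ridge) term, a ratio of 0 only the L1 (lasso) term.
ridge_only = elastic_net_penalty(weights, penalty_level=2.0, l2_penalty_ratio=1.0)
lasso_only = elastic_net_penalty(weights, penalty_level=2.0, l2_penalty_ratio=0.0)
mixed = elastic_net_penalty(weights, penalty_level=2.0, l2_penalty_ratio=0.5)
print(ridge_only, lasso_only, mixed)
```

Under this convention, calibrating both parameters at once explores every blend between the ridge and lasso cases.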

Calibrate the elasticnet penalty of the polynomial regression

Define and execute the calibration

calibration_space = DesignSpace()
# Two float variables: "penalty_level" in [0, 40] and "l2_penalty_ratio" in [0, 1].
calibration_space.add_variable("penalty_level", 1, "float", 0.0, 40.0, 0.0)
calibration_space.add_variable("l2_penalty_ratio", 1, "float", 0.0, 1.0, 0.5)
calibration = MLAlgoCalibration(
    "PolynomialRegressor",
    dataset,
    ["penalty_level", "l2_penalty_ratio"],
    calibration_space,
    MSEMeasure,
    measure_options,
    degree=10,
)
# 100 full-factorial samples over two variables, i.e. 10 levels per variable.
calibration.execute({"algo": "fullfact", "n_samples": 100})
x_opt = calibration.optimal_parameters
f_opt = calibration.optimal_criterion
print("optimal penalty_level:", x_opt["penalty_level"][0])
print("optimal l2_penalty_ratio:", x_opt["l2_penalty_ratio"][0])
print("optimal criterion:", f_opt)

Out:

INFO - 07:18:29:

INFO - 07:18:29: *** Start DOEScenario execution ***

INFO - 07:18:29: DOEScenario

INFO - 07:18:29: Disciplines: MLAlgoAssessor

INFO - 07:18:29: MDO formulation: DisciplinaryOpt

INFO - 07:18:29: Optimization problem:

INFO - 07:18:29: minimize criterion(penalty_level, l2_penalty_ratio)

INFO - 07:18:29: with respect to l2_penalty_ratio, penalty_level

INFO - 07:18:29: over the design space:

INFO - 07:18:29: +------------------+-------------+-------+-------------+-------+

INFO - 07:18:29: | name | lower_bound | value | upper_bound | type |

INFO - 07:18:29: +------------------+-------------+-------+-------------+-------+

INFO - 07:18:29: | penalty_level | 0 | 0 | 40 | float |

INFO - 07:18:29: | l2_penalty_ratio | 0 | 0.5 | 1 | float |

INFO - 07:18:29: +------------------+-------------+-------+-------------+-------+

INFO - 07:18:29: Solving optimization problem with algorithm fullfact:

INFO - 07:18:29: Full factorial design required. Number of samples along each direction for a design vector of size 2 with 100 samples: 10

INFO - 07:18:29: Final number of samples for DOE = 100 vs 100 requested

INFO - 07:18:29: ... 0%| | 0/100 [00:00<?, ?it]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.904e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.786e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.325e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.034e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.631e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.166e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.968e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.545e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.811e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.233e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.032e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.991e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.326e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.100e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:29: ... 16%|█▌ | 16/100 [00:00<00:00, 941.31 it/sec, obj=4.76e+4]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.636e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.011e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.376e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 3.274e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.416e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.049e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.114e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.879e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.346e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.139e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 3.088e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.614e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.419e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.445e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:29: ... 32%|███▏ | 32/100 [00:00<00:00, 473.43 it/sec, obj=2.43e+4]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.489e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.720e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.355e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 5.901e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.912e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.813e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.575e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 4.228e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.024e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.011e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.113e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.519e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.055e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.144e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.279e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:29: ... 48%|████▊ | 48/100 [00:00<00:00, 311.51 it/sec, obj=5.93e+4]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.108e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.238e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.431e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.364e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.413e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.943e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.337e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.369e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.650e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.892e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.651e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.740e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.649e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:29: ... 63%|██████▎ | 63/100 [00:00<00:00, 237.23 it/sec, obj=4.07e+4]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.844e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.984e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.131e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.602e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.732e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.134e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.496e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.000e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.897e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.148e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.706e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.195e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.466e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.580e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:29: ... 78%|███████▊ | 78/100 [00:00<00:00, 189.66 it/sec, obj=6.32e+4]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.258e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.020e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.222e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.179e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.446e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.507e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.936e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.005e+05, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.010e+05, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.877e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 9.866e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:30: ... 96%|█████████▌| 96/100 [00:00<00:00, 158.71 it/sec, obj=1.78e+4]

INFO - 07:18:30: ... 100%|██████████| 100/100 [00:00<00:00, 155.76 it/sec, obj=1.87e+4]

INFO - 07:18:30: Optimization result:

INFO - 07:18:30: Optimizer info:

INFO - 07:18:30: Status: None

INFO - 07:18:30: Message: None

INFO - 07:18:30: Number of calls to the objective function by the optimizer: 100

INFO - 07:18:30: Solution:

INFO - 07:18:30: Objective: 4136.820826715568

INFO - 07:18:30: Design space:

INFO - 07:18:30: +------------------+-------------+-------------------+-------------+-------+
INFO - 07:18:30: | name             | lower_bound |       value       | upper_bound | type  |
INFO - 07:18:30: +------------------+-------------+-------------------+-------------+-------+
INFO - 07:18:30: | penalty_level    |      0      | 4.444444444444445 |      40     | float |
INFO - 07:18:30: | l2_penalty_ratio |      0      |         0         |      1      | float |
INFO - 07:18:30: +------------------+-------------+-------------------+-------------+-------+

INFO - 07:18:30: *** End DOEScenario execution (time: 0:00:00.651325) ***

optimal penalty_level: 4.444444444444445

optimal l2_penalty_ratio: 0.0

optimal criterion: 4136.820826715568
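The log above is dominated by repeated `ConvergenceWarning` messages raised during the elastic-net fits. A minimal sketch, using only the standard `warnings` module, of how such messages could be captured and counted instead of printed. The helper `run_and_count_warnings` and the stand-in `noisy_fit` are hypothetical names introduced for illustration; in this example one would presumably wrap the call to `calibration.execute(...)` instead, which is an assumption and not something the original script does.

```python
import warnings


def run_and_count_warnings(func, needle="did not converge"):
    """Call func() and count the warnings whose message contains `needle`."""
    with warnings.catch_warnings(record=True) as caught:
        # Record every warning, including repeats from the same code line.
        warnings.simplefilter("always")
        result = func()
    n_matches = sum(needle in str(w.message) for w in caught)
    return result, n_matches


def noisy_fit():
    """Hypothetical stand-in for a model fit that warns three times."""
    for _ in range(3):
        warnings.warn("Objective did not converge.", UserWarning)
    return "fitted"


result, n_warnings = run_and_count_warnings(noisy_fit)
print(result, n_warnings)
```

Counting the warnings rather than silencing them keeps the information that many fits did not converge, which matters when interpreting the criterion values.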

Get the history¶

print(calibration.dataset.export_to_dataframe())

Out:

          outputs           inputs       outputs        inputs
        criterion l2_penalty_ratio      learning penalty_level
                0                0             0             0
0   162525.860760              0.0  8.767875e-23      0.000000
1     4136.820827              0.0  4.546714e+02      4.444444
2    13371.034446              0.0  1.375915e+03      8.888889
3    17860.819693              0.0  2.176736e+03     13.333333
4    23914.366014              0.0  3.005032e+03     17.777778
..            ...              ...           ...           ...
95   17820.599507              1.0  1.982580e+03     22.222222
96   16816.595592              1.0  2.285780e+03     26.666667
97   16894.821607              1.0  2.561538e+03     31.111111
98   17602.178769              1.0  2.812662e+03     35.555556
99   18674.751406              1.0  3.041823e+03     40.000000

[100 rows x 4 columns]
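The printed history is consistent with a 10 × 10 grid over the two parameters: `penalty_level` moves in steps of 40/9 ≈ 4.444 and varies fastest, while `l2_penalty_ratio` goes from 0 to 1. The following sketch rebuilds such a grid in plain Python to make the row ordering explicit. It is inferred from the table only and is not a description of how GEMSEO implements its `fullfact` algorithm; the helper `linspace` is introduced here for illustration.

```python
from itertools import product


def linspace(lower, upper, n_levels):
    """Return n_levels evenly spaced values from lower to upper (inclusive)."""
    step = (upper - lower) / (n_levels - 1)
    return [lower + i * step for i in range(n_levels)]


penalty_levels = linspace(0.0, 40.0, 10)
l2_ratios = linspace(0.0, 1.0, 10)

# itertools.product varies its last argument fastest, so l2_penalty_ratio is
# the outer loop and penalty_level the inner one, as in the printed history.
grid = [(p, r) for r, p in product(l2_ratios, penalty_levels)]

print(len(grid))  # number of (penalty_level, l2_penalty_ratio) pairs
print(grid[1])    # second row of the history
print(grid[95])   # row 95 of the history
```

With this ordering, row 1 corresponds to `penalty_level` ≈ 4.444 with `l2_penalty_ratio` 0, and row 95 to `penalty_level` ≈ 22.222 with `l2_penalty_ratio` 1, matching the values shown in the table.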

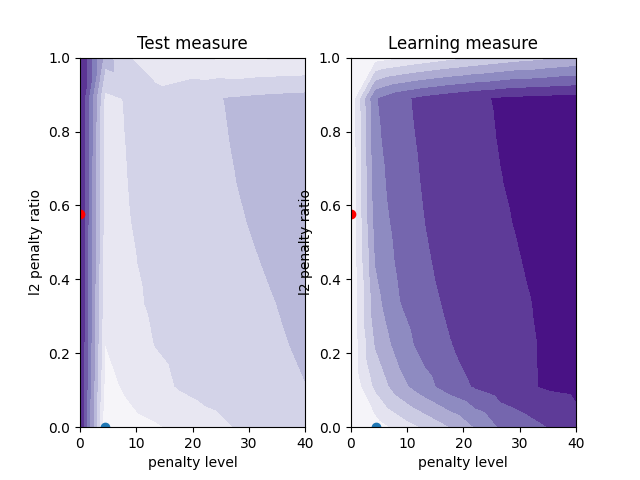

Visualize the results¶

penalty_level = calibration.get_history("penalty_level").flatten()

l2_penalty_ratio = calibration.get_history("l2_penalty_ratio").flatten()

criterion = calibration.get_history("criterion").flatten()

learning = calibration.get_history("learning").flatten()

triang = Triangulation(penalty_level, l2_penalty_ratio)

fig = plt.figure()

ax = fig.add_subplot(1, 2, 1)

ax.tricontourf(triang, criterion, cmap="Purples")

ax.scatter(x_opt["penalty_level"][0], x_opt["l2_penalty_ratio"][0])

ax.set_xlabel("penalty level")

ax.set_ylabel("l2 penalty ratio")

ax.set_title("Test measure")

ax = fig.add_subplot(1, 2, 2)

ax.tricontourf(triang, learning, cmap="Purples")

ax.scatter(x_opt["penalty_level"][0], x_opt["l2_penalty_ratio"][0])

ax.set_xlabel("penalty level")

ax.set_ylabel("l2 penalty ratio")

ax.set_title("Learning measure")

plt.show()

Add an optimization stage¶

calibration_space = DesignSpace()

calibration_space.add_variable("penalty_level", 1, "float", 0.0, 40.0, 0.0)

calibration_space.add_variable("l2_penalty_ratio", 1, "float", 0.0, 1.0, 0.5)

calibration = MLAlgoCalibration(

"PolynomialRegressor",

dataset,

["penalty_level", "l2_penalty_ratio"],

calibration_space,

MSEMeasure,

measure_options,

degree=10,

)

calibration.execute({"algo": "NLOPT_COBYLA", "max_iter": 100})

x_opt2 = calibration.optimal_parameters

f_opt2 = calibration.optimal_criterion

fig = plt.figure()

ax = fig.add_subplot(1, 2, 1)

ax.tricontourf(triang, criterion, cmap="Purples")

ax.scatter(x_opt["penalty_level"][0], x_opt["l2_penalty_ratio"][0])

ax.scatter(x_opt2["penalty_level"][0], x_opt2["l2_penalty_ratio"][0], color="red")

ax.set_xlabel("penalty level")

ax.set_ylabel("l2 penalty ratio")

ax.set_title("Test measure")

ax = fig.add_subplot(1, 2, 2)

ax.tricontourf(triang, learning, cmap="Purples")

ax.scatter(x_opt["penalty_level"][0], x_opt["l2_penalty_ratio"][0])

ax.scatter(x_opt2["penalty_level"][0], x_opt2["l2_penalty_ratio"][0], color="red")

ax.set_xlabel("penalty level")

ax.set_ylabel("l2 penalty ratio")

ax.set_title("Learning measure")

plt.show()

n_iterations = len(calibration.scenario.disciplines[0].cache)

print(f"MSE with DOE: {f_opt} (100 evaluations)")

print(f"MSE with OPT: {f_opt2} ({n_iterations} evaluations)")

print(f"MSE reduction:{round((f_opt2 - f_opt) / f_opt * 100)}%")

Out:

INFO - 07:18:30:

INFO - 07:18:30: *** Start MDOScenario execution ***

INFO - 07:18:30: MDOScenario

INFO - 07:18:30: Disciplines: MLAlgoAssessor

INFO - 07:18:30: MDO formulation: DisciplinaryOpt

INFO - 07:18:30: Optimization problem:

INFO - 07:18:30: minimize criterion(penalty_level, l2_penalty_ratio)

INFO - 07:18:30: with respect to l2_penalty_ratio, penalty_level

INFO - 07:18:30: over the design space:

INFO - 07:18:30: +------------------+-------------+-------+-------------+-------+
INFO - 07:18:30: | name             | lower_bound | value | upper_bound | type  |
INFO - 07:18:30: +------------------+-------------+-------+-------------+-------+
INFO - 07:18:30: | penalty_level    |      0      |   0   |      40     | float |
INFO - 07:18:30: | l2_penalty_ratio |      0      |  0.5  |      1      | float |
INFO - 07:18:30: +------------------+-------------+-------+-------------+-------+

INFO - 07:18:30: Solving optimization problem with algorithm NLOPT_COBYLA:

INFO - 07:18:30: ... 0%| | 0/100 [00:00<?, ?it]

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.287e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.952e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.118e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 8.089e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.973e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 7.402e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 6.059e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 1.428e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.0.0/lib/python3.9/site-packages/sklearn/linear_model/_coordinate_descent.py:648: ConvergenceWarning: Objective did not converge. You might want to increase the number of iterations, check the scale of the features or consider increasing regularisation. Duality gap: 2.976e+04, tolerance: 2.850e+03

model = cd_fast.enet_coordinate_descent(

INFO - 07:18:30: ... 15%|█▌ | 15/100 [00:00<00:00, 964.25 it/sec, obj=493]

INFO - 07:18:30: ... 16%|█▌ | 16/100 [00:00<00:00, 906.74 it/sec, obj=493]

INFO - 07:18:30: Optimization result:

INFO - 07:18:30: Optimizer info:

INFO - 07:18:30: Status: None

INFO - 07:18:30: Message: Successive iterates of the objective function are closer than ftol_rel or ftol_abs. GEMSEO Stopped the driver

INFO - 07:18:30: Number of calls to the objective function by the optimizer: 17

INFO - 07:18:30: Solution:

INFO - 07:18:30: Objective: 493.1818200496802

INFO - 07:18:30: Design space:

INFO - 07:18:30: +------------------+-------------+-----------------------+-------------+-------+
INFO - 07:18:30: | name             | lower_bound |         value         | upper_bound | type  |
INFO - 07:18:30: +------------------+-------------+-----------------------+-------------+-------+
INFO - 07:18:30: | penalty_level    |      0      | 2.289834988289385e-15 |      40     | float |
INFO - 07:18:30: | l2_penalty_ratio |      0      |   0.5765298371174132  |      1      | float |
INFO - 07:18:30: +------------------+-------------+-----------------------+-------------+-------+

INFO - 07:18:30: *** End MDOScenario execution (time: 0:00:00.120341) ***

MSE with DOE: 4136.820826715568 (100 evaluations)

MSE with OPT: 493.1818200496802 (2 evaluations)

MSE reduction:-88%
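The reported percentage is the relative change of the test MSE between the two stages, so a negative value means the optimization stage lowered the error. A short check using the two values printed above; the variable names `mse_doe` and `mse_opt` are chosen here for illustration.

```python
# Values copied from the output above.
mse_doe = 4136.820826715568  # best test MSE found by the DOE stage
mse_opt = 493.1818200496802  # best test MSE found by the optimization stage

# Relative change in percent; negative means the MSE decreased.
relative_change = (mse_opt - mse_doe) / mse_doe * 100

print(f"MSE reduction: {round(relative_change)}%")
```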

Total running time of the script: ( 0 minutes 1.770 seconds)