Gaussian process (GP) regression#

A GaussianProcessRegressor is a GP regression model

based on scikit-learn.

See also

You can find more information about building GP models with scikit-learn on this page.

from __future__ import annotations

from matplotlib import pyplot as plt

from numpy import array

from sklearn.gaussian_process.kernels import RBF

from sklearn.gaussian_process.kernels import Matern

from gemseo import create_design_space

from gemseo import create_discipline

from gemseo import sample_disciplines

from gemseo.mlearning import create_regression_model

Problem#

In this example,

we represent the function \(f(x)=(6x-2)^2\sin(12x-4)\) [FSK08]

with an AnalyticDiscipline:

discipline = create_discipline(
    "AnalyticDiscipline",
    name="f",
    expressions={"y": "(6*x-2)**2*sin(12*x-4)"},
)

and seek to approximate it over the input space:

input_space = create_design_space()

input_space.add_variable("x", lower_bound=0.0, upper_bound=1.0)

To do this, we create a training dataset with 6 equispaced points:

training_dataset = sample_disciplines(
    [discipline], input_space, "y", algo_name="PYDOE_FULLFACT", n_samples=6
)

INFO - 16:21:44: *** Start Sampling execution ***

INFO - 16:21:44: Sampling

INFO - 16:21:44: Disciplines: f

INFO - 16:21:44: MDO formulation: MDF

INFO - 16:21:44: Running the algorithm PYDOE_FULLFACT:

INFO - 16:21:44: 17%|█▋ | 1/6 [00:00<00:00, 628.27 it/sec]

INFO - 16:21:44: 33%|███▎ | 2/6 [00:00<00:00, 1023.63 it/sec]

INFO - 16:21:44: 50%|█████ | 3/6 [00:00<00:00, 1351.26 it/sec]

INFO - 16:21:44: 67%|██████▋ | 4/6 [00:00<00:00, 1618.80 it/sec]

INFO - 16:21:44: 83%|████████▎ | 5/6 [00:00<00:00, 1831.73 it/sec]

INFO - 16:21:44: 100%|██████████| 6/6 [00:00<00:00, 1971.32 it/sec]

INFO - 16:21:44: *** End Sampling execution ***
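As a plain-Python cross-check, the six training points can be reproduced by hand. This is only a sketch: it assumes that the full-factorial design places its six levels uniformly on \([0,1]\), endpoints included, and the names `forrester`, `x_train` and `y_train` are illustrative, not part of GEMSEO.

```python
from math import sin


def forrester(x: float) -> float:
    """Evaluate f(x) = (6x - 2)^2 sin(12x - 4)."""
    return (6 * x - 2) ** 2 * sin(12 * x - 4)


# Assumption: six equispaced levels on [0, 1], endpoints included.
n_samples = 6
x_train = [i / (n_samples - 1) for i in range(n_samples)]
y_train = [forrester(x) for x in x_train]

for x, y in zip(x_train, y_train):
    print(f"x = {x:.1f}  ->  y = {y: .4f}")
```

The output values span very different magnitudes across the interval, which is part of what makes this function a demanding test for a six-point surrogate.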

Basics#

Training#

Then, we train a GP regression model from these samples:

model = create_regression_model("GaussianProcessRegressor", training_dataset)

model.learn()

Prediction#

Once it is built, we can predict the output value of \(f\) at a new input point:

input_value = {"x": array([0.65])}

output_value = model.predict(input_value)

output_value

{'y': array([2.20380214])}

but cannot predict its Jacobian value:

try:

    model.predict_jacobian(input_value)

except NotImplementedError:

    print("The derivatives are not available for GaussianProcessRegressor.")

The derivatives are not available for GaussianProcessRegressor.
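When a derivative is needed anyway, one common workaround is to approximate it by central finite differences on any scalar predictor. The sketch below is generic and does not use GEMSEO: `central_difference` and its default `step` are illustrative choices, and the original function \(f\) stands in for the predictor so that the result can be compared with the exact derivative.

```python
from math import cos, sin


def f(x: float) -> float:
    """Stand-in predictor: f(x) = (6x - 2)^2 sin(12x - 4)."""
    return (6 * x - 2) ** 2 * sin(12 * x - 4)


def central_difference(func, x: float, step: float = 1e-6) -> float:
    """Approximate func'(x) with a second-order central difference."""
    return (func(x + step) - func(x - step)) / (2 * step)


def f_prime(x: float) -> float:
    """Exact derivative of f, used only to check the approximation."""
    u = 6 * x - 2
    return 12 * u * sin(12 * x - 4) + 12 * u**2 * cos(12 * x - 4)


approx = central_difference(f, 0.65)
exact = f_prime(0.65)
print(f"finite difference: {approx:.6f}, exact: {exact:.6f}")
```

With the trained model, the predictor could presumably be wrapped as `lambda x: model.predict({"x": array([x])})["y"][0]`, following the dictionary convention shown above; this wrapper is an untested assumption.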

Uncertainty#

GP models are often valued for their ability to quantify model uncertainty. Indeed, a GP model is a random process fully characterized by its mean and covariance functions. Given an input point \(x\), the prediction is the mean at \(x\) and the uncertainty is the standard deviation at \(x\):

standard_deviation = model.predict_std(input_value)

standard_deviation

array([[0.3140468]])
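To make the roles of the mean and the standard deviation concrete, here is a minimal NumPy sketch of GP conditioning. It is not the GEMSEO or scikit-learn implementation: it assumes a zero-mean prior with unit variance, a Matérn 5/2 kernel whose length scale is fixed at 1, no data scaling and no hyperparameter optimization, so its numbers are not expected to match the values printed above. The names `matern52` and `gp_posterior` are illustrative.

```python
import numpy as np


def matern52(x1, x2, length_scale=1.0):
    """Matérn 5/2 covariance between two sets of 1D points."""
    s = np.sqrt(5.0) * np.abs(x1[:, None] - x2[None, :]) / length_scale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(x_train, y_train, x_new, alpha=1e-10):
    """Return the posterior mean and standard deviation at x_new."""
    k_tt = matern52(x_train, x_train) + alpha * np.eye(len(x_train))
    k_nt = matern52(x_new, x_train)
    mean = k_nt @ np.linalg.solve(k_tt, y_train)
    # The prior variance is 1; subtract the part explained by the data.
    explained = np.sum(k_nt * np.linalg.solve(k_tt, k_nt.T).T, axis=1)
    variance = np.clip(1.0 - explained, 0.0, None)
    return mean, np.sqrt(variance)


# Assumption: six equispaced training points on [0, 1].
x_train = np.linspace(0.0, 1.0, 6)
y_train = (6 * x_train - 2) ** 2 * np.sin(12 * x_train - 4)

mean, std = gp_posterior(x_train, y_train, np.array([0.65]))
mean_train, std_train = gp_posterior(x_train, y_train, x_train)
print("at x = 0.65:", mean, std)
print("largest std at the training points:", std_train.max())
```

The standard deviation nearly vanishes at the training points and grows between them, which is the behaviour the uncertainty estimate is meant to capture.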

Plotting#

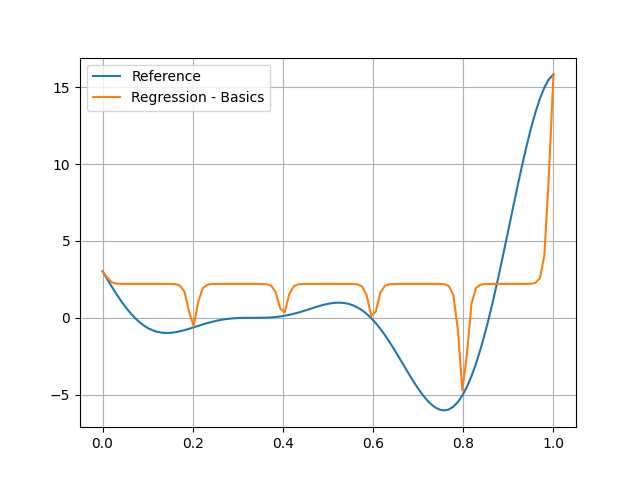

You can see that the GP model interpolates the training points but is inaccurate elsewhere. This case-dependent problem is due to poor automatic tuning of the kernel length scales. We will look at how to correct this next.

test_dataset = sample_disciplines(
    [discipline], input_space, "y", algo_name="PYDOE_FULLFACT", n_samples=100
)

input_data = test_dataset.get_view(variable_names=model.input_names).to_numpy()

reference_output_data = test_dataset.get_view(variable_names="y").to_numpy().ravel()

predicted_output_data = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.grid()

plt.legend()

plt.show()

INFO - 16:21:44: *** Start Sampling execution ***

INFO - 16:21:44: Sampling

INFO - 16:21:44: Disciplines: f

INFO - 16:21:44: MDO formulation: MDF

INFO - 16:21:44: Running the algorithm PYDOE_FULLFACT:

INFO - 16:21:44: 1%| | 1/100 [00:00<00:00, 3908.95 it/sec]

INFO - 16:21:44: 100%|██████████| 100/100 [00:00<00:00, 4273.71 it/sec]

INFO - 16:21:44: *** End Sampling execution ***

Settings#

The GaussianProcessRegressor has many options

defined in the GaussianProcessRegressor_Settings Pydantic model.

Here are the main ones.

Kernel#

The kernel option defines the kernel function

parametrizing the Gaussian process regressor

and must be passed as a scikit-learn object.

The default kernel is the Matérn 5/2 covariance function

with input length scales belonging to the interval \([0.01,100]\),

initialized at 1

and optimized by the L-BFGS-B algorithm.

We can replace this kernel with a Matérn 5/2 kernel

whose input length scales are fixed at 1:

model = create_regression_model(
    "GaussianProcessRegressor",
    training_dataset,
    kernel=Matern(length_scale=1.0, length_scale_bounds="fixed", nu=2.5),
)

model.learn()

predicted_output_data_1 = model.predict(input_data).ravel()

or a squared exponential covariance kernel with input length scales fixed at 1:

model = create_regression_model(
    "GaussianProcessRegressor",
    training_dataset,
    kernel=RBF(length_scale=1.0, length_scale_bounds="fixed"),
)

model.learn()

predicted_output_data_2 = model.predict(input_data).ravel()
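For reference, the two kernels compared here can be written as functions of the distance between two inputs. The sketch below uses only the standard library and follows the formulas that scikit-learn documents for `Matern(nu=2.5)` and `RBF`; the function names are illustrative.

```python
from math import exp, sqrt


def matern52(distance: float, length_scale: float = 1.0) -> float:
    """Matérn 5/2 correlation for a given distance."""
    s = sqrt(5.0) * distance / length_scale
    return (1.0 + s + s**2 / 3.0) * exp(-s)


def rbf(distance: float, length_scale: float = 1.0) -> float:
    """Squared exponential correlation for a given distance."""
    return exp(-0.5 * (distance / length_scale) ** 2)


# Correlation between two inputs as their distance grows (length scale 1).
for distance in (0.0, 0.2, 0.5, 1.0):
    print(
        f"d = {distance:.1f}  "
        f"Matern 5/2 = {matern52(distance):.4f}  "
        f"RBF = {rbf(distance):.4f}"
    )
```

Both kernels depend on the inputs only through the ratio of distance to length scale, which is why the length scale controls how quickly the model forgets a training point as it moves away from it.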

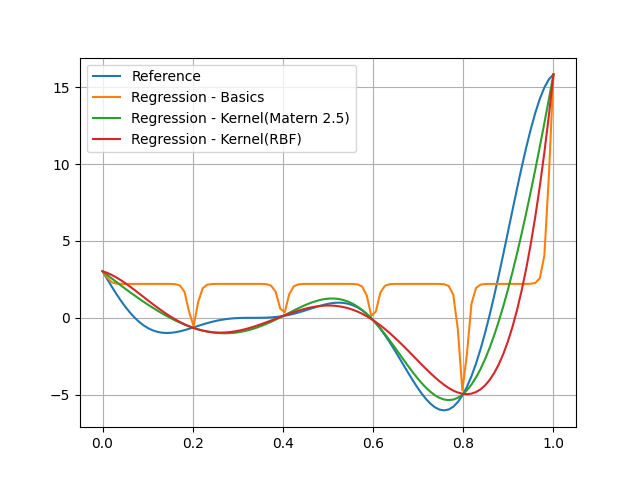

These two models are much better than the previous one, notably the one with the Matérn 5/2 kernel. This suggests that the problem with the initial model lies in the length scale values found by numerical optimization:

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.plot(
    input_data.ravel(), predicted_output_data_1, label="Regression - Kernel(Matern 2.5)"
)

plt.plot(input_data.ravel(), predicted_output_data_2, label="Regression - Kernel(RBF)")

plt.grid()

plt.legend()

plt.show()

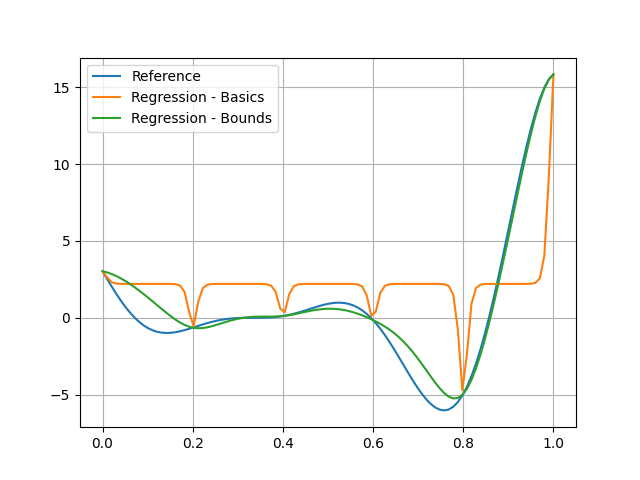

Bounds#

The bounds option defines the bounds of the input length scales:

model = create_regression_model(
    "GaussianProcessRegressor", training_dataset, bounds=(1e-1, 1e2)
)

model.learn()

Increasing the lower bound can facilitate the training, as in this example:

predicted_output_data_ = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.plot(input_data.ravel(), predicted_output_data_, label="Regression - Bounds")

plt.grid()

plt.legend()

plt.show()
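The length scales are tuned by maximizing the log marginal likelihood of the training data, so the bounds delimit the region in which this maximum is searched. The NumPy sketch below illustrates the idea with a coarse grid search instead of L-BFGS-B. It assumes a zero-mean GP with unit prior variance, a Matérn 5/2 kernel and unscaled data, so it is not expected to reproduce the values found by the actual model; all names are illustrative.

```python
import numpy as np


def matern52(x1, x2, length_scale):
    """Matérn 5/2 covariance between two sets of 1D points."""
    s = np.sqrt(5.0) * np.abs(x1[:, None] - x2[None, :]) / length_scale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def log_marginal_likelihood(length_scale, x, y, alpha=1e-10):
    """Log marginal likelihood of a zero-mean GP with unit prior variance."""
    k = matern52(x, x, length_scale) + alpha * np.eye(len(x))
    _, log_det = np.linalg.slogdet(k)
    data_fit = y @ np.linalg.solve(k, y)
    return -0.5 * (data_fit + log_det + len(x) * np.log(2.0 * np.pi))


# Assumption: six equispaced training points on [0, 1].
x_train = np.linspace(0.0, 1.0, 6)
y_train = (6 * x_train - 2) ** 2 * np.sin(12 * x_train - 4)

# Candidate length scales on a log grid covering [0.01, 100].
grid = np.logspace(-2, 2, 41)
lml = np.array([log_marginal_likelihood(ls, x_train, y_train) for ls in grid])

# Best candidate over the full grid, then with the lower bound raised to 0.1.
best_full = grid[np.argmax(lml)]
restricted = grid >= 0.1
best_restricted = grid[restricted][np.argmax(lml[restricted])]
print("best length scale on [0.01, 100]:", best_full)
print("best length scale on [0.1, 100]: ", best_restricted)
```

Raising the lower bound simply removes the smallest candidates from the search, which can keep the optimizer away from very short length scales when the training set is small.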

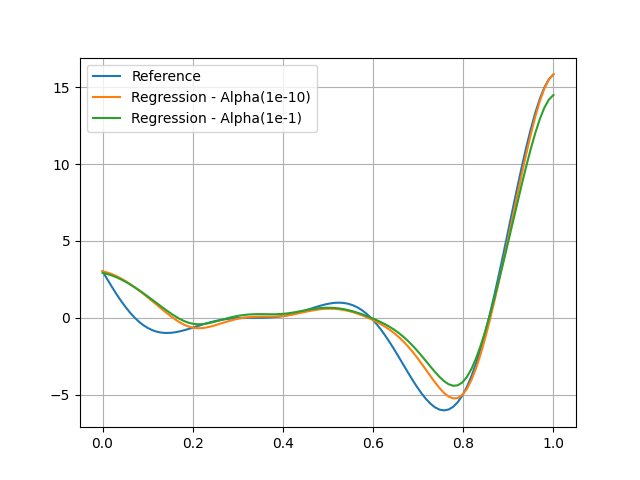

Alpha#

The alpha parameter (default: 1e-10),

often called the nugget effect,

is the value added to the diagonal of the training kernel matrix

to avoid overfitting.

When alpha is equal to zero,

the GP model interpolates the training points

at which the standard deviation is equal to zero.

The larger alpha is, the less interpolating the GP model is.

For example, we can increase the value to 0.1:

predicted_output_data_1 = predicted_output_data_

model = create_regression_model(
    "GaussianProcessRegressor", training_dataset, bounds=(1e-1, 1e2), alpha=0.1
)

model.learn()

and see that the model moves away from the training points:

predicted_output_data_2 = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data_1, label="Regression - Alpha(1e-10)")

plt.plot(input_data.ravel(), predicted_output_data_2, label="Regression - Alpha(1e-1)")

plt.grid()

plt.legend()

plt.show()
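The effect of alpha can also be checked numerically by measuring the gap between the GP mean and the training outputs. The NumPy sketch below does this for a zero-mean GP with a Matérn 5/2 kernel whose length scale is fixed at 1. These are simplifying assumptions, not the configuration of the model above, and the helper names are illustrative.

```python
import numpy as np


def matern52(x1, x2, length_scale=1.0):
    """Matérn 5/2 covariance between two sets of 1D points."""
    s = np.sqrt(5.0) * np.abs(x1[:, None] - x2[None, :]) / length_scale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def fitted_values(x, y, alpha):
    """Posterior mean of a zero-mean GP evaluated at its own training inputs."""
    k = matern52(x, x)
    # alpha is added to the diagonal of the training kernel matrix only.
    return k @ np.linalg.solve(k + alpha * np.eye(len(x)), y)


# Assumption: six equispaced training points on [0, 1].
x_train = np.linspace(0.0, 1.0, 6)
y_train = (6 * x_train - 2) ** 2 * np.sin(12 * x_train - 4)

gaps = {}
for alpha in (1e-10, 1e-1):
    gaps[alpha] = np.max(np.abs(fitted_values(x_train, y_train, alpha) - y_train))
    print(f"alpha = {alpha:g}: largest gap at the training points = {gaps[alpha]:.2e}")
```

With the small value the mean passes through the training outputs up to numerical precision, while the larger value trades this exactness for a smoother fit.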
