Quadratic approximations

In this example, we illustrate the use of the QuadApprox plot on Sobieski’s SSBJ problem.

from gemseo.api import configure_logger

from gemseo.api import create_discipline

from gemseo.api import create_scenario

from gemseo.problems.sobieski.core.problem import SobieskiProblem

from matplotlib import pyplot as plt

Import

The first step is to import some functions from the API and the SobieskiProblem class, which provides the design space.

configure_logger()

Out:

<RootLogger root (INFO)>

Description

The QuadApprox post-processing performs a quadratic approximation of a given function from an optimization history and plots the results as cuts of the approximation.

Create disciplines

Then, we instantiate the disciplines of Sobieski’s SSBJ problem: Propulsion, Aerodynamics, Structure and Mission.

disciplines = create_discipline(
    [
        "SobieskiPropulsion",
        "SobieskiAerodynamics",
        "SobieskiStructure",
        "SobieskiMission",
    ]
)

Create design space

We also read the design space from the SobieskiProblem.

design_space = SobieskiProblem().design_space

Create and execute scenario

The next step is to build an MDO scenario in order to maximize the range, denoted ‘y_4’, with respect to the design parameters, while satisfying the inequality constraints ‘g_1’, ‘g_2’ and ‘g_3’. We use the MDF formulation, the SLSQP optimization algorithm and a maximum number of iterations equal to 10. Because the objective is maximized, the optimization problem is internally posed as the minimization of ‘-y_4’, as the log below shows.

scenario = create_scenario(
    disciplines,
    formulation="MDF",
    objective_name="y_4",
    maximize_objective=True,
    design_space=design_space,
)

scenario.set_differentiation_method("user")

for constraint in ["g_1", "g_2", "g_3"]:
    scenario.add_constraint(constraint, "ineq")

scenario.execute({"algo": "SLSQP", "max_iter": 10})

Out:

INFO - 07:15:00:

INFO - 07:15:00: *** Start MDOScenario execution ***

INFO - 07:15:00: MDOScenario

INFO - 07:15:00: Disciplines: SobieskiPropulsion SobieskiAerodynamics SobieskiStructure SobieskiMission

INFO - 07:15:00: MDO formulation: MDF

INFO - 07:15:00: Optimization problem:

INFO - 07:15:00: minimize -y_4(x_shared, x_1, x_2, x_3)

INFO - 07:15:00: with respect to x_1, x_2, x_3, x_shared

INFO - 07:15:00: subject to constraints:

INFO - 07:15:00: g_1(x_shared, x_1, x_2, x_3) <= 0.0

INFO - 07:15:00: g_2(x_shared, x_1, x_2, x_3) <= 0.0

INFO - 07:15:00: g_3(x_shared, x_1, x_2, x_3) <= 0.0

INFO - 07:15:00: over the design space:

INFO - 07:15:00: +----------+-------------+-------+-------------+-------+

INFO - 07:15:00: | name | lower_bound | value | upper_bound | type |

INFO - 07:15:00: +----------+-------------+-------+-------------+-------+

INFO - 07:15:00: | x_shared | 0.01 | 0.05 | 0.09 | float |

INFO - 07:15:00: | x_shared | 30000 | 45000 | 60000 | float |

INFO - 07:15:00: | x_shared | 1.4 | 1.6 | 1.8 | float |

INFO - 07:15:00: | x_shared | 2.5 | 5.5 | 8.5 | float |

INFO - 07:15:00: | x_shared | 40 | 55 | 70 | float |

INFO - 07:15:00: | x_shared | 500 | 1000 | 1500 | float |

INFO - 07:15:00: | x_1 | 0.1 | 0.25 | 0.4 | float |

INFO - 07:15:00: | x_1 | 0.75 | 1 | 1.25 | float |

INFO - 07:15:00: | x_2 | 0.75 | 1 | 1.25 | float |

INFO - 07:15:00: | x_3 | 0.1 | 0.5 | 1 | float |

INFO - 07:15:00: +----------+-------------+-------+-------------+-------+

INFO - 07:15:00: Solving optimization problem with algorithm SLSQP:

INFO - 07:15:00: ... 0%| | 0/10 [00:00<?, ?it]

INFO - 07:15:01: ... 20%|██ | 2/10 [00:00<00:00, 41.08 it/sec, obj=-2.12e+3]

WARNING - 07:15:01: MDAJacobi has reached its maximum number of iterations but the normed residual 1.0259352902248124e-06 is still above the tolerance 1e-06.

INFO - 07:15:01: ... 30%|███ | 3/10 [00:00<00:00, 23.48 it/sec, obj=-3.8e+3]

INFO - 07:15:01: ... 40%|████ | 4/10 [00:00<00:00, 16.96 it/sec, obj=-3.96e+3]

INFO - 07:15:01: ... 50%|█████ | 5/10 [00:00<00:00, 13.29 it/sec, obj=-3.96e+3]

INFO - 07:15:01: ... 60%|██████ | 6/10 [00:00<00:00, 10.95 it/sec, obj=-3.96e+3]

INFO - 07:15:01: ... 70%|███████ | 7/10 [00:01<00:00, 9.44 it/sec, obj=-4.81e+3]

INFO - 07:15:02: ... 90%|█████████ | 9/10 [00:01<00:00, 7.81 it/sec, obj=-3.87e+3]

INFO - 07:15:02: ... 100%|██████████| 10/10 [00:01<00:00, 7.42 it/sec, obj=-4.64e+3]

INFO - 07:15:02: Optimization result:

INFO - 07:15:02: Optimizer info:

INFO - 07:15:02: Status: None

INFO - 07:15:02: Message: Maximum number of iterations reached. GEMSEO Stopped the driver

INFO - 07:15:02: Number of calls to the objective function by the optimizer: 12

INFO - 07:15:02: Solution:

INFO - 07:15:02: The solution is feasible.

INFO - 07:15:02: Objective: -3963.5118239326903

INFO - 07:15:02: Standardized constraints:

INFO - 07:15:02: g_1 = [-0.01808064 -0.03336052 -0.04426042 -0.05184355 -0.05733364 -0.13720861

INFO - 07:15:02: -0.10279139]

INFO - 07:15:02: g_2 = 9.785920617177979e-06

INFO - 07:15:02: g_3 = [-7.67233630e-01 -2.32766370e-01 8.55509121e-05 -1.83255000e-01]

INFO - 07:15:02: Design space:

INFO - 07:15:02: +----------+-------------+---------------------+-------------+-------+

INFO - 07:15:02: | name | lower_bound | value | upper_bound | type |

INFO - 07:15:02: +----------+-------------+---------------------+-------------+-------+

INFO - 07:15:02: | x_shared | 0.01 | 0.06000244648015432 | 0.09 | float |

INFO - 07:15:02: | x_shared | 30000 | 60000 | 60000 | float |

INFO - 07:15:02: | x_shared | 1.4 | 1.4 | 1.8 | float |

INFO - 07:15:02: | x_shared | 2.5 | 2.5 | 8.5 | float |

INFO - 07:15:02: | x_shared | 40 | 70 | 70 | float |

INFO - 07:15:02: | x_shared | 500 | 1500 | 1500 | float |

INFO - 07:15:02: | x_1 | 0.1 | 0.3999997783130735 | 0.4 | float |

INFO - 07:15:02: | x_1 | 0.75 | 0.75 | 1.25 | float |

INFO - 07:15:02: | x_2 | 0.75 | 0.75 | 1.25 | float |

INFO - 07:15:02: | x_3 | 0.1 | 0.1562581125267868 | 1 | float |

INFO - 07:15:02: +----------+-------------+---------------------+-------------+-------+

INFO - 07:15:02: *** End MDOScenario execution (time: 0:00:01.361917) ***

{'max_iter': 10, 'algo': 'SLSQP'}

Post-process scenario

Lastly, we post-process the scenario by means of the QuadApprox plot, which performs a quadratic approximation of a given function from an optimization history and plots the results as cuts of the approximation.

Tip

Each post-processing method requires different inputs and offers a variety of customization options. Use the API function get_post_processing_options_schema() to print a table with the options for any post-processing algorithm, or refer to our dedicated page: Post-processing algorithms.

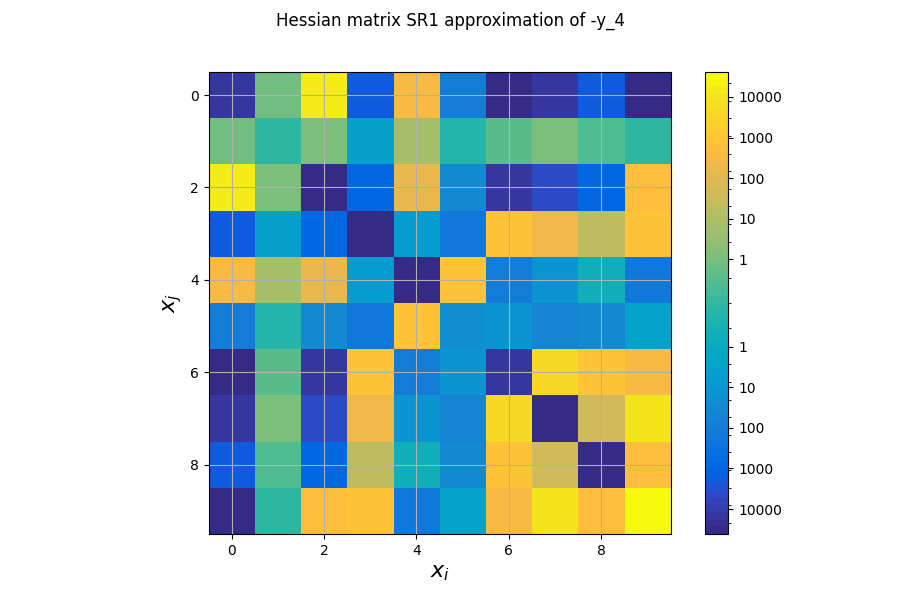

The first plot shows an approximation of the Hessian matrix \(\frac{\partial^2 f}{\partial x_i \partial x_j}\) based on the Symmetric Rank 1 method (SR1) [NW06]. The color map uses a symmetric logarithmic (symlog) scale. This plot shows the cross influence of the design variables on the objective function or constraints. For instance, in this plot, the maximal second-order sensitivity is \(\frac{\partial^2 (-y_4)}{\partial x_0^2} = 2 \times 10^5\), which means that \(x_0\) is the most influential variable. The cross derivative \(\frac{\partial^2 (-y_4)}{\partial x_0 \partial x_2} = 5 \times 10^4\) is positive and smaller than the previous value, yet it is large enough that the combined effect of \(x_0\) and \(x_2\) is non-negligible.
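To make the SR1 idea concrete, here is a minimal sketch of the textbook SR1 update applied to a toy two-variable quadratic. It is not GEMSEO's implementation: the function name `sr1_update`, the matrix `H` and the `history` of iterates are hypothetical and chosen only for illustration. Each step uses the change in design variables \(s\) and the change in gradient \(y\) to correct the current Hessian estimate \(B\) by a rank-one term.

```python
def sr1_update(B, s, y, tol=1e-8):
    """Return the SR1 update of the Hessian estimate B (list of lists).

    s is the step between two iterates, y the matching gradient change.
    """
    n = len(s)
    # Residual between the observed and the predicted gradient change.
    r = [y[i] - sum(B[i][j] * s[j] for j in range(n)) for i in range(n)]
    denom = sum(r[i] * s[i] for i in range(n))
    norm_r = sum(v * v for v in r) ** 0.5
    norm_s = sum(v * v for v in s) ** 0.5
    # Usual safeguard: skip the update when the denominator is too small.
    if abs(denom) <= tol * norm_r * norm_s:
        return [row[:] for row in B]
    return [[B[i][j] + r[i] * r[j] / denom for j in range(n)] for i in range(n)]


# Hypothetical toy objective f(x) = 0.5 x^T H x, whose gradient is H x.
H = [[4.0, 1.0], [1.0, 3.0]]


def grad(x):
    return [sum(H[i][j] * x[j] for j in range(2)) for i in range(2)]


# Hypothetical optimization history (successive design points).
history = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]

B = [[1.0, 0.0], [0.0, 1.0]]  # initial guess: identity matrix
for x_prev, x_next in zip(history, history[1:]):
    s = [b - a for a, b in zip(x_prev, x_next)]
    y = [b - a for a, b in zip(grad(x_prev), grad(x_next))]
    B = sr1_update(B, s, y)
```

On this toy quadratic, two linearly independent steps are enough for `B` to match `H` up to rounding. On a real optimization history the steps are not chosen freely, so the estimate is only approximate.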

scenario.post_process("QuadApprox", function="-y_4", save=False, show=False)

# Workaround for HTML rendering, instead of ``show=True``

plt.show()

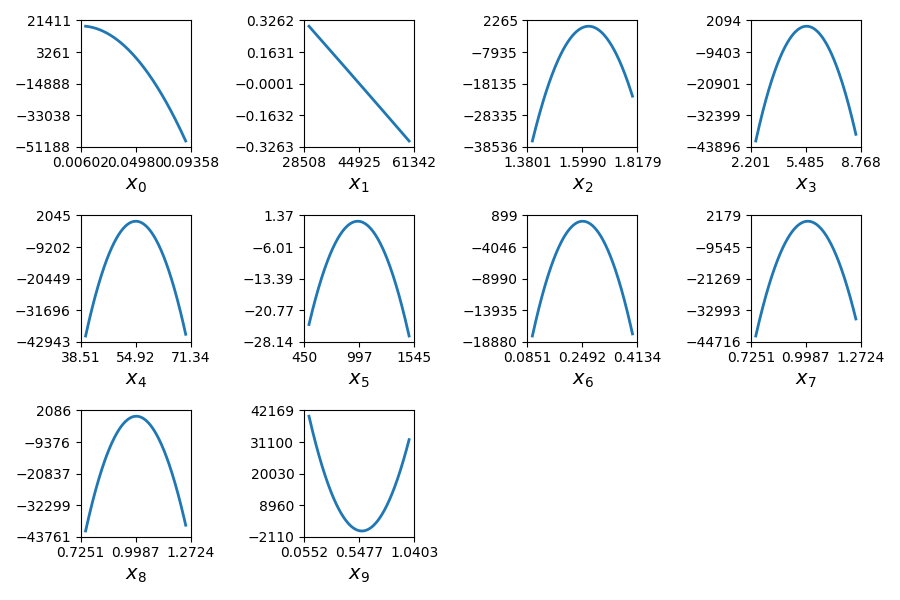

The second plot represents the quadratic approximation of the objective around the optimal solution: \(a_{i}(t)=0.5 (t-x^*_i)^2 \frac{\partial^2 f}{\partial x_i^2} + (t-x^*_i) \frac{\partial f}{\partial x_i} + f(x^*)\), where \(x^*\) is the optimal solution. This approximation highlights the sensitivity of the objective function with respect to the design variables: we notice that the design variables \(x_1, x_5, x_6\) have little influence, whereas \(x_0, x_2, x_9\) have a strong influence on the objective. This trend is also visible in the diagonal terms of the Hessian matrix \(\frac{\partial^2 f}{\partial x_i^2}\).
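The cut \(a_i(t)\) can be evaluated directly from its definition. The sketch below is only an illustration: the helper name `quadratic_cut` and the numerical values are hypothetical and are not taken from the run above. It assumes the first and second derivatives with respect to \(x_i\) are evaluated at \(x^*\).

```python
def quadratic_cut(t, x_star_i, f_star, grad_i, hess_ii):
    """Evaluate a_i(t) = 0.5 (t - x*_i)^2 H_ii + (t - x*_i) g_i + f(x*)."""
    step = t - x_star_i
    return 0.5 * step**2 * hess_ii + step * grad_i + f_star


# Hypothetical values, for illustration only.
x_star_i = 1.0  # i-th component of the optimum x*
f_star = 2.0    # objective value f(x*)
grad_i = 3.0    # first derivative with respect to x_i at x*
hess_ii = 4.0   # diagonal Hessian term for x_i at x*

# Sample the cut on both sides of the optimum.
cut = [quadratic_cut(t, x_star_i, f_star, grad_i, hess_ii) for t in (0.5, 1.0, 1.5)]
# cut == [1.0, 2.0, 4.0]
```

At \(t = x^*_i\) the cut returns \(f(x^*)\). A larger diagonal Hessian term gives a more strongly curved cut, which is how the plot conveys the influence of each variable.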

Total running time of the script: ( 0 minutes 2.209 seconds)