Analytical test case # 2

In this example, we consider a simple optimization problem to illustrate the algorithm interfaces and the integration of optimization libraries.

Imports

from __future__ import annotations

from gemseo.algos.design_space import DesignSpace

from gemseo.algos.doe.doe_factory import DOEFactory

from gemseo.algos.opt.opt_factory import OptimizersFactory

from gemseo.algos.opt_problem import OptimizationProblem

from gemseo.api import configure_logger

from gemseo.api import execute_post

from gemseo.core.mdofunctions.mdo_function import MDOFunction

from matplotlib import pyplot as plt

from numpy import cos

from numpy import exp

from numpy import ones

from numpy import sin

configure_logger()

<RootLogger root (INFO)>

Define the objective function

We define the objective function \(f(x)=\sin(x)-\exp(x)\) using an MDOFunction built as the difference of two MDOFunction objects.

f_1 = MDOFunction(sin, name="f_1", jac=cos, expr="sin(x)")

f_2 = MDOFunction(exp, name="f_2", jac=exp, expr="exp(x)")

objective = f_1 - f_2

See also

The following operators are implemented for MDOFunction objects: addition, subtraction and multiplication. The unary minus operator is also defined.
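To illustrate how subtracting two function objects can carry the derivative along with the value, here is a minimal standard-library sketch. The class `SimpleFunction` and the names `g_1`, `g_2` and `difference` are hypothetical and only mimic the idea; this is not how GEMSEO implements MDOFunction.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass
class SimpleFunction:
    """Hypothetical stand-in for a function object that carries its derivative."""

    func: Callable[[float], float]
    jac: Callable[[float], float]
    expr: str

    def __sub__(self, other: "SimpleFunction") -> "SimpleFunction":
        # The derivative of a difference is the difference of the derivatives,
        # so the value, the derivative and the expression are combined together.
        return SimpleFunction(
            func=lambda x: self.func(x) - other.func(x),
            jac=lambda x: self.jac(x) - other.jac(x),
            expr=f"{self.expr}-{other.expr}",
        )


g_1 = SimpleFunction(math.sin, math.cos, "sin(x)")
g_2 = SimpleFunction(math.exp, math.exp, "exp(x)")
difference = g_1 - g_2

print(difference.expr)
print(difference.func(-0.5))  # sin(x)-exp(x) evaluated at x = -0.5
print(difference.jac(-0.5))   # cos(x)-exp(x) evaluated at x = -0.5
```

The point of the design is that the analytical derivative never has to be rewritten by hand for the combined function, which is what lets a gradient-based optimizer use it directly.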

Define the design space

Then, we define the DesignSpace with GEMSEO.

design_space = DesignSpace()

design_space.add_variable("x", 1, l_b=-2.0, u_b=2.0, value=-0.5 * ones(1))
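The bounds declared here are also what a driver needs in order to work with scaled variables, as requested by the `normalize_design_space` option used later in this example. The sketch below assumes the usual affine mapping of the interval [l_b, u_b] onto [0, 1]; the helper names are hypothetical and the code does not call GEMSEO.

```python
def normalize(x: float, lower: float, upper: float) -> float:
    """Map x from [lower, upper] to [0, 1]."""
    return (x - lower) / (upper - lower)


def unnormalize(u: float, lower: float, upper: float) -> float:
    """Map u from [0, 1] back to [lower, upper]."""
    return lower + u * (upper - lower)


def gradient_wrt_normalized(df_dx: float, lower: float, upper: float) -> float:
    """Chain rule: df/du = df/dx * dx/du, with dx/du = upper - lower."""
    return df_dx * (upper - lower)


lower, upper, start = -2.0, 2.0, -0.5

u_start = normalize(start, lower, upper)
print(u_start)                             # 0.375
print(unnormalize(u_start, lower, upper))  # -0.5
```

Under this assumption the starting point -0.5 sits at 0.375 in the normalized interval, and gradients seen by the optimizer are scaled by the width of the interval, here 4.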

Define the optimization problem

Then, we define the OptimizationProblem with GEMSEO.

problem = OptimizationProblem(design_space)

problem.objective = objective

Solve the optimization problem using an optimization algorithm

Finally, we solve the optimization problem through the GEMSEO interface.

Solve the problem

opt = OptimizersFactory().execute(problem, "L-BFGS-B", normalize_design_space=True)

print("Optimum = ", opt)

INFO - 14:47:12: Optimization problem:

INFO - 14:47:12: minimize f_1-f_2 = sin(x)-exp(x)

INFO - 14:47:12: with respect to x

INFO - 14:47:12: over the design space:

INFO - 14:47:12: +------+-------------+-------+-------------+-------+

INFO - 14:47:12: | name | lower_bound | value | upper_bound | type |

INFO - 14:47:12: +------+-------------+-------+-------------+-------+

INFO - 14:47:12: | x | -2 | -0.5 | 2 | float |

INFO - 14:47:12: +------+-------------+-------+-------------+-------+

INFO - 14:47:12: Solving optimization problem with algorithm L-BFGS-B:

INFO - 14:47:12: ... 0%| | 0/999 [00:00<?, ?it]

INFO - 14:47:12: ... 1%| | 7/999 [00:00<00:00, 150853.60 it/sec, obj=[-1.23610834]]

INFO - 14:47:12: Optimization result:

INFO - 14:47:12: Optimizer info:

INFO - 14:47:12: Status: 0

INFO - 14:47:12: Message: CONVERGENCE: NORM_OF_PROJECTED_GRADIENT_<=_PGTOL

INFO - 14:47:12: Number of calls to the objective function by the optimizer: 8

INFO - 14:47:12: Solution:

INFO - 14:47:12: Objective: [-1.23610834]

INFO - 14:47:12: Design space:

INFO - 14:47:12: +------+-------------+--------------------+-------------+-------+

INFO - 14:47:12: | name | lower_bound | value | upper_bound | type |

INFO - 14:47:12: +------+-------------+--------------------+-------------+-------+

INFO - 14:47:12: | x | -2 | -1.292695718944152 | 2 | float |

INFO - 14:47:12: +------+-------------+--------------------+-------------+-------+

Optimum = Optimization result:

Optimizer info:

Status: 0

Message: CONVERGENCE: NORM_OF_PROJECTED_GRADIENT_<=_PGTOL

Number of calls to the objective function by the optimizer: 8

Solution:

Objective: [-1.23610834]
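The reported solution can be cross-checked by hand: a minimizer inside the bounds must satisfy \(f'(x)=\cos(x)-\exp(x)=0\). The sketch below is an independent check with the standard library, not part of the GEMSEO workflow; the bisection helper and the bracket [-2, -0.5] (lower bound to starting point) are choices made for this illustration.

```python
import math


def objective(x: float) -> float:
    return math.sin(x) - math.exp(x)


def gradient(x: float) -> float:
    return math.cos(x) - math.exp(x)


def second_derivative(x: float) -> float:
    return -math.sin(x) - math.exp(x)


def bisect_root(fun, left: float, right: float, tol: float = 1e-12) -> float:
    """Find a root of fun in [left, right], assuming fun changes sign there."""
    f_left = fun(left)
    if f_left * fun(right) > 0:
        raise ValueError("fun must change sign over the bracket")
    while right - left > tol:
        mid = 0.5 * (left + right)
        f_mid = fun(mid)
        if f_left * f_mid <= 0:
            right = mid
        else:
            left, f_left = mid, f_mid
    return 0.5 * (left + right)


x_star = bisect_root(gradient, -2.0, -0.5)
print(x_star, objective(x_star))  # stationary point and its objective value
print(second_derivative(x_star))  # positive, so this is a local minimum
print(objective(2.0))             # objective at the upper bound, for comparison
```

The stationary point agrees with the logged design value to within the optimizer tolerance, and the positive second derivative confirms a local minimum. The objective at the upper bound x = 2 is lower still, so the gradient-based run started from -0.5 has converged to a local minimum rather than the best point of the interval.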

Note that you can list the names of all the available optimization algorithms:

algo_list = OptimizersFactory().algorithms

print("Available algorithms ", algo_list)

Available algorithms ['NLOPT_MMA', 'NLOPT_COBYLA', 'NLOPT_SLSQP', 'NLOPT_BOBYQA', 'NLOPT_BFGS', 'NLOPT_NEWUOA', 'PDFO_COBYLA', 'PDFO_BOBYQA', 'PDFO_NEWUOA', 'PSEVEN', 'PSEVEN_FD', 'PSEVEN_MOM', 'PSEVEN_NCG', 'PSEVEN_NLS', 'PSEVEN_POWELL', 'PSEVEN_QP', 'PSEVEN_SQP', 'PSEVEN_SQ2P', 'PYMOO_GA', 'PYMOO_NSGA2', 'PYMOO_NSGA3', 'PYMOO_UNSGA3', 'PYMOO_RNSGA3', 'DUAL_ANNEALING', 'SHGO', 'DIFFERENTIAL_EVOLUTION', 'LINEAR_INTERIOR_POINT', 'REVISED_SIMPLEX', 'SIMPLEX', 'SLSQP', 'L-BFGS-B', 'TNC', 'SNOPTB']

Save the optimization results

We can serialize the results to an HDF5 file for later use.

problem.export_hdf("my_optim.hdf5")

INFO - 14:47:12: Export optimization problem to file: my_optim.hdf5

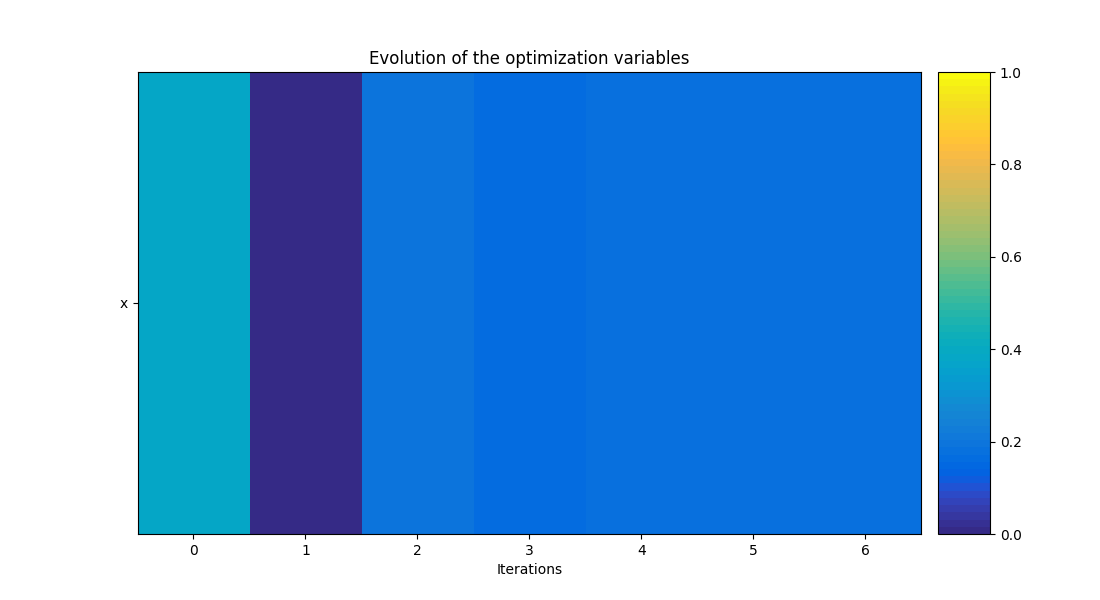

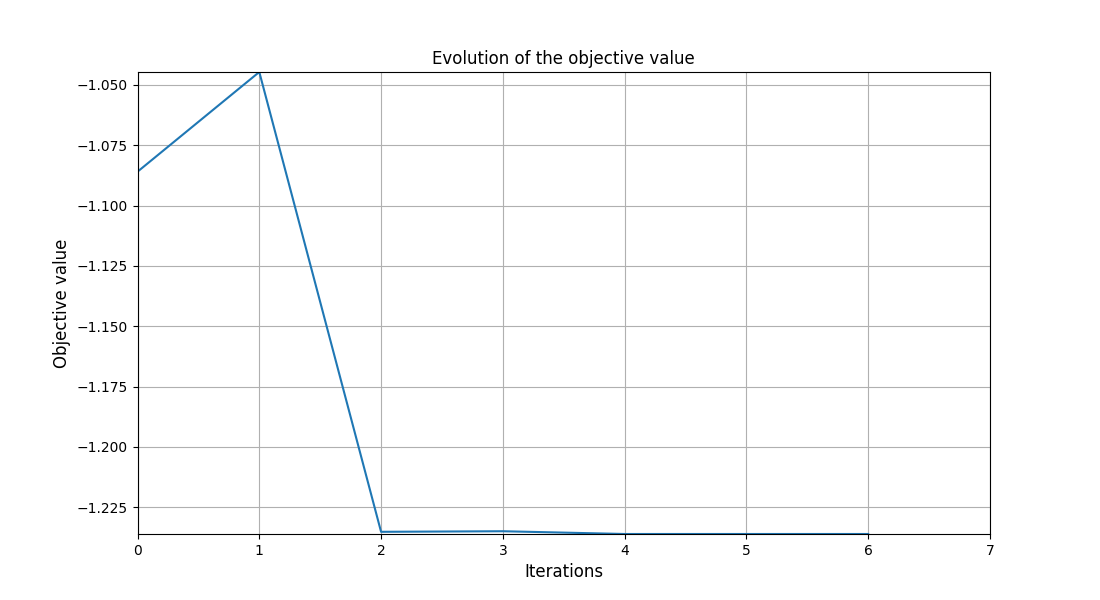

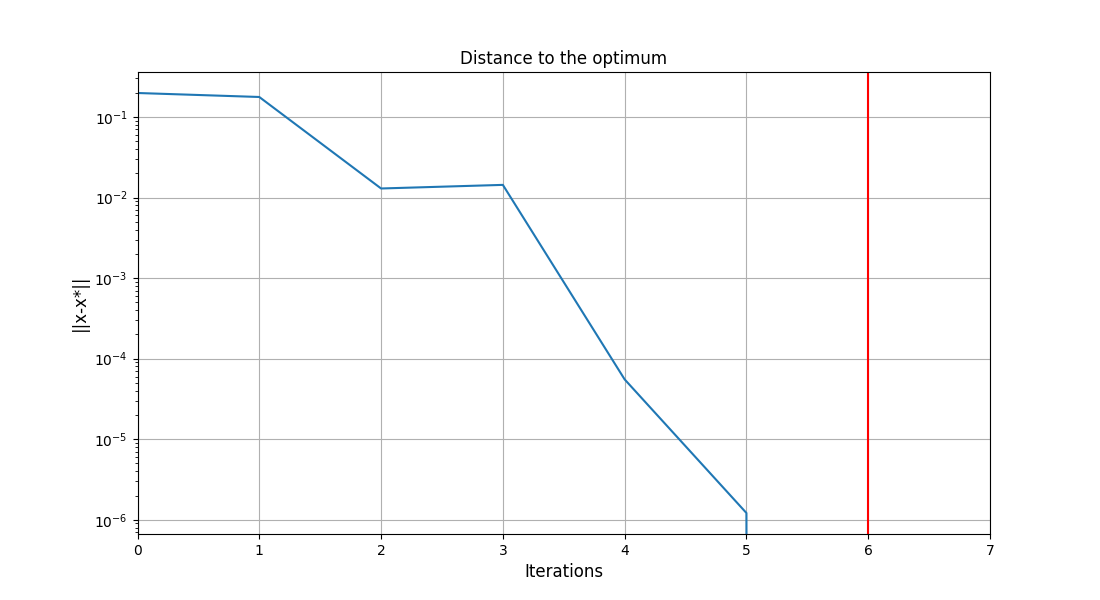

Post-process the results¶

execute_post(problem, "OptHistoryView", show=False, save=False)

# Workaround for HTML rendering, instead of ``show=True``

plt.show()

WARNING - 14:47:12: Failed to create Hessian approximation.

Traceback (most recent call last):

File "/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.1.0/lib/python3.9/site-packages/gemseo/post/opt_history_view.py", line 625, in _create_hessian_approx_plot

_, diag, _, _ = approximator.build_approximation(

File "/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.1.0/lib/python3.9/site-packages/gemseo/post/core/hessians.py", line 382, in build_approximation

x_hist, grad_hist, _, _ = self.get_x_grad_history(

File "/home/docs/checkouts/readthedocs.org/user_builds/gemseo/envs/4.1.0/lib/python3.9/site-packages/gemseo/post/core/hessians.py", line 185, in get_x_grad_history

raise ValueError(

ValueError: Inconsistent gradient and design variables optimization history.

Note

We can also save this plot using the arguments save=True and file_path='file_path'.

Solve the optimization problem using a DOE algorithm

We can also treat this optimization problem as a trade-off study and explore it by means of a design of experiments (DOE).

opt = DOEFactory().execute(problem, "lhs", n_samples=10, normalize_design_space=True)

print("Optimum = ", opt)

WARNING - 14:47:13: Driver lhs has no option normalize_design_space, option is ignored.

INFO - 14:47:13: Optimization problem:

INFO - 14:47:13: minimize f_1-f_2 = sin(x)-exp(x)

INFO - 14:47:13: with respect to x

INFO - 14:47:13: over the design space:

INFO - 14:47:13: +------+-------------+--------------------+-------------+-------+

INFO - 14:47:13: | name | lower_bound | value | upper_bound | type |

INFO - 14:47:13: +------+-------------+--------------------+-------------+-------+

INFO - 14:47:13: | x | -2 | -1.292695718944152 | 2 | float |

INFO - 14:47:13: +------+-------------+--------------------+-------------+-------+

INFO - 14:47:13: Solving optimization problem with algorithm lhs:

INFO - 14:47:13: ... 0%| | 0/10 [00:00<?, ?it]

INFO - 14:47:13: ... 100%|██████████| 10/10 [00:00<00:00, 3334.64 it/sec, obj=[-1.00069899]]

INFO - 14:47:13: Optimization result:

INFO - 14:47:13: Optimizer info:

INFO - 14:47:13: Status: None

INFO - 14:47:13: Message: None

INFO - 14:47:13: Number of calls to the objective function by the optimizer: 18

INFO - 14:47:13: Solution:

INFO - 14:47:13: Objective: [-5.1741088]

INFO - 14:47:13: Design space:

INFO - 14:47:13: +------+-------------+-------------------+-------------+-------+

INFO - 14:47:13: | name | lower_bound | value | upper_bound | type |

INFO - 14:47:13: +------+-------------+-------------------+-------------+-------+

INFO - 14:47:13: | x | -2 | 1.815526693601343 | 2 | float |

INFO - 14:47:13: +------+-------------+-------------------+-------------+-------+

Optimum = Optimization result:

Optimizer info:

Status: None

Message: None

Number of calls to the objective function by the optimizer: 18

Solution:

Objective: [-5.1741088]
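The DOE run reports a lower objective than the gradient-based run because its samples cover the whole interval instead of following a descent path from the starting point. The sketch below illustrates the idea with a one-dimensional Latin hypercube written with the standard library only; the function `latin_hypercube_1d` and the seed are hypothetical choices for this illustration, and the samples will not match the ones drawn by GEMSEO.

```python
import math
import random


def objective(x: float) -> float:
    return math.sin(x) - math.exp(x)


def latin_hypercube_1d(n_samples, lower, upper, rng):
    """Draw one point in each of n_samples equal-width strata, in random order."""
    width = (upper - lower) / n_samples
    points = [lower + (i + rng.random()) * width for i in range(n_samples)]
    rng.shuffle(points)
    return points


rng = random.Random(0)  # arbitrary fixed seed, only for reproducibility
samples = latin_hypercube_1d(10, -2.0, 2.0, rng)

# A DOE does not iterate: it evaluates every sample and keeps the best one.
best_x = min(samples, key=objective)
print(best_x, objective(best_x))
```

With ten strata over [-2, 2], one sample always falls in [1.6, 2], where the objective is well below the local minimum found from the starting point -0.5, which is consistent with the behaviour seen in the log.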
