Linear regression#

A LinearRegressor is a linear regression model based on scikit-learn.

See also

You can find more information about building linear models with scikit-learn on this page.

from __future__ import annotations

from matplotlib import pyplot as plt

from numpy import array

from gemseo import create_design_space

from gemseo import create_discipline

from gemseo import sample_disciplines

from gemseo.mlearning import create_regression_model

Problem#

In this example, we represent the function \(f(x)=(6x-2)^2\sin(12x-4)\) [FSK08] by the AnalyticDiscipline:

discipline = create_discipline(

"AnalyticDiscipline",

name="f",

expressions={"y": "(6*x-2)**2*sin(12*x-4)"},

)

and seek to approximate it over the input space:

input_space = create_design_space()

input_space.add_variable("x", lower_bound=0.0, upper_bound=1.0)

To do this, we create a training dataset with 6 equispaced points:

training_dataset = sample_disciplines(

[discipline], input_space, "y", algo_name="PYDOE_FULLFACT", n_samples=6

)

INFO - 16:21:45: *** Start Sampling execution ***

INFO - 16:21:45: Sampling

INFO - 16:21:45: Disciplines: f

INFO - 16:21:45: MDO formulation: MDF

INFO - 16:21:45: Running the algorithm PYDOE_FULLFACT:

INFO - 16:21:45: 17%|█▋ | 1/6 [00:00<00:00, 646.67 it/sec]

INFO - 16:21:45: 33%|███▎ | 2/6 [00:00<00:00, 1063.46 it/sec]

INFO - 16:21:45: 50%|█████ | 3/6 [00:00<00:00, 1395.78 it/sec]

INFO - 16:21:45: 67%|██████▋ | 4/6 [00:00<00:00, 1650.65 it/sec]

INFO - 16:21:45: 83%|████████▎ | 5/6 [00:00<00:00, 1874.80 it/sec]

INFO - 16:21:45: 100%|██████████| 6/6 [00:00<00:00, 2006.84 it/sec]

INFO - 16:21:45: *** End Sampling execution ***

Basics#

Training#

Then, we train a linear regression model from these samples:

model = create_regression_model("LinearRegressor", training_dataset)

model.learn()

Prediction#

Once it is built, we can predict the output value of \(f\) at a new input point:

input_value = {"x": array([0.65])}

output_value = model.predict(input_value)

output_value

{'y': array([3.29457456])}

as well as its Jacobian value:

jacobian_value = model.predict_jacobian(input_value)

jacobian_value

{'y': {'x': array([[7.26002643]])}}
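As a sanity check, both values above can be reproduced with a least-squares line fitted by hand. The sketch below is not GEMSEO code: it assumes that the full-factorial DOE places the six training points at 0, 0.2, ..., 1 and that the default transformers do not change an unpenalized fit. The names `xs`, `ys`, `slope`, `intercept` and `prediction` are illustrative.

```python
from math import sin


def f(x):
    """The function represented by the AnalyticDiscipline."""
    return (6 * x - 2) ** 2 * sin(12 * x - 4)


# Assumed training points: 6 equispaced values in [0, 1].
xs = [i / 5 for i in range(6)]
ys = [f(x) for x in xs]

# Closed-form ordinary least squares for a single input.
x_mean = sum(xs) / len(xs)
y_mean = sum(ys) / len(ys)
s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
s_xx = sum((x - x_mean) ** 2 for x in xs)
slope = s_xy / s_xx
intercept = y_mean - slope * x_mean

# Prediction at the same input point as above; the Jacobian is the slope.
prediction = intercept + slope * 0.65
print(prediction, slope)
```

Under these assumptions, the printed values agree with the prediction and the Jacobian shown above.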

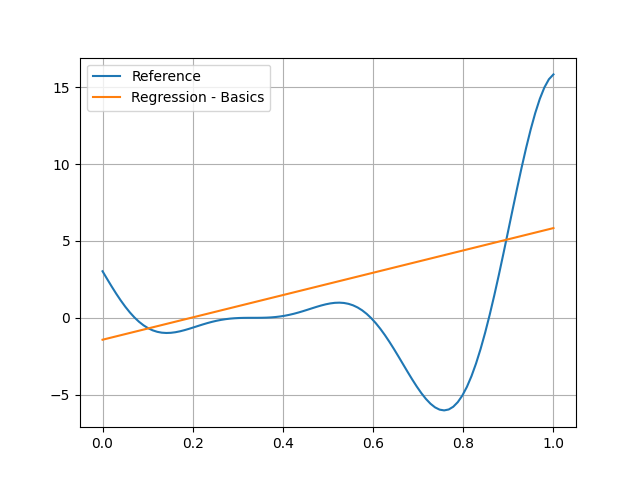

Plotting#

As expected, a plot shows that the linear model approximates this strongly nonlinear function poorly:

test_dataset = sample_disciplines(

[discipline], input_space, "y", algo_name="PYDOE_FULLFACT", n_samples=100

)

input_data = test_dataset.get_view(variable_names=model.input_names).to_numpy()

reference_output_data = test_dataset.get_view(variable_names="y").to_numpy().ravel()

predicted_output_data = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.grid()

plt.legend()

plt.show()

INFO - 16:21:45: *** Start Sampling execution ***

INFO - 16:21:45: Sampling

INFO - 16:21:45: Disciplines: f

INFO - 16:21:45: MDO formulation: MDF

INFO - 16:21:45: Running the algorithm PYDOE_FULLFACT:

INFO - 16:21:45: 1%| | 1/100 [00:00<00:00, 4017.53 it/sec]

[...]

INFO - 16:21:45: 100%|██████████| 100/100 [00:00<00:00, 4291.51 it/sec]

INFO - 16:21:45: *** End Sampling execution ***
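The mismatch seen in the plot can also be quantified, for instance with the R2 score and the root mean squared error on the 100-point test grid. The following sketch refits the line by hand instead of using the GEMSEO model. It assumes equispaced training and test points, and the helper `fit_line` is hypothetical.

```python
from math import sin


def f(x):
    """The reference function."""
    return (6 * x - 2) ** 2 * sin(12 * x - 4)


def fit_line(xs, ys):
    """Return the intercept and slope of the least-squares line."""
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    return y_mean - slope * x_mean, slope


# Assumed training set: 6 equispaced points in [0, 1].
train_x = [i / 5 for i in range(6)]
intercept, slope = fit_line(train_x, [f(x) for x in train_x])

# Assumed test set: 100 equispaced points in [0, 1].
test_x = [i / 99 for i in range(100)]
reference = [f(x) for x in test_x]
predicted = [intercept + slope * x for x in test_x]

# R2 compares the squared residuals with the variance of the reference.
mean_ref = sum(reference) / len(reference)
ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
ss_tot = sum((r - mean_ref) ** 2 for r in reference)
r2 = 1 - ss_res / ss_tot
rmse = (ss_res / len(reference)) ** 0.5
print(f"R2 = {r2:.3f}, RMSE = {rmse:.3f}")
```

An R2 score far from 1 confirms what the plot suggests: a straight line cannot capture the oscillations of the reference function.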

Settings#

The LinearRegressor has many options, defined in the LinearRegressor_Settings Pydantic model.

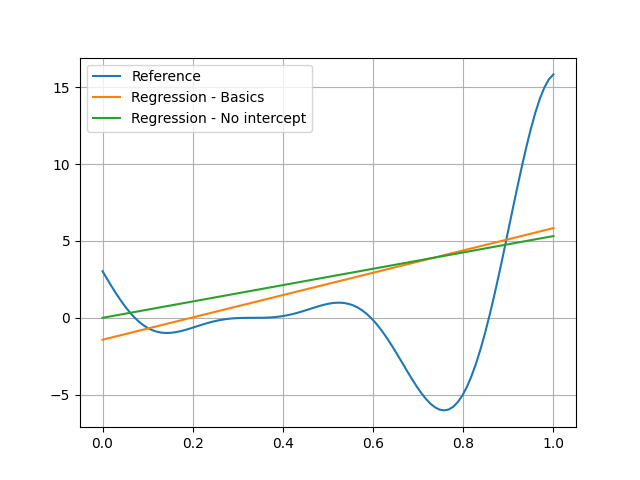

Intercept#

By default, the linear model is of the form \(a_0+a_1x_1+\ldots+a_dx_d\). You can set the option fit_intercept to False if you want a linear model of the form \(a_1x_1+\ldots+a_dx_d\):

model = create_regression_model(

"LinearRegressor", training_dataset, fit_intercept=False, transformer={}

)

model.learn()

Warning

The presence or absence of the intercept applies in the space of transformed variables. This is why we removed the default transformers by setting transformer to {}.

We can see the impact of this option in the following visualization:

predicted_output_data_ = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.plot(input_data.ravel(), predicted_output_data_, label="Regression - No intercept")

plt.grid()

plt.legend()

plt.show()
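The effect of dropping the intercept can also be checked by hand. With a single input and no transformer, minimizing the sum of squared residuals of \(a_1x\) gives \(a_1=\sum_i x_iy_i/\sum_i x_i^2\). The sketch below assumes the six training points are 0, 0.2, ..., 1, and its variable names are illustrative.

```python
from math import sin


def f(x):
    """The reference function."""
    return (6 * x - 2) ** 2 * sin(12 * x - 4)


# Assumed training points: 6 equispaced values in [0, 1].
xs = [i / 5 for i in range(6)]
ys = [f(x) for x in xs]

# Slope of the model a_0 + a_1 * x (with intercept).
x_mean = sum(xs) / len(xs)
y_mean = sum(ys) / len(ys)
slope_with_intercept = sum(
    (x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)
) / sum((x - x_mean) ** 2 for x in xs)

# Slope of the model a_1 * x (no intercept): the line is forced through the origin.
slope_without_intercept = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

print(slope_with_intercept, slope_without_intercept)
```

The two slopes differ because the model without intercept must pass through the origin, whereas the default model is free to shift vertically.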

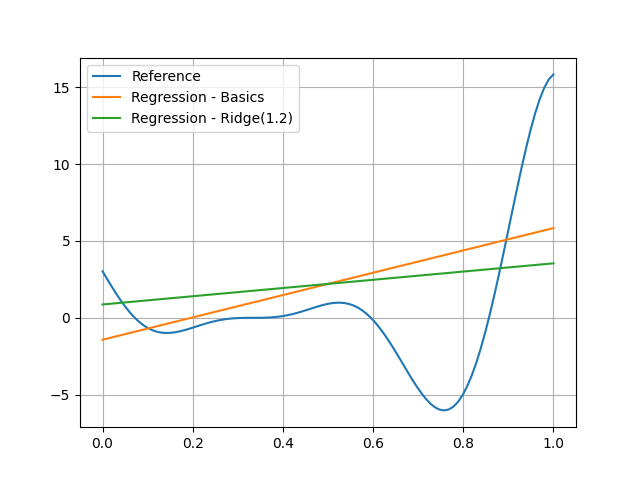

Regularization#

When the number of samples is small relative to the input dimension, regularization techniques can help prevent overfitting, i.e. a model that fits the training data very well but generalizes poorly.

The penalty_level option is a non-negative real number defining the degree of regularization (default: no regularization).

By default, the regularization technique is the ridge penalty (l2 regularization).

The technique can be replaced by the lasso penalty (l1 regularization) by setting the l2_penalty_ratio option to 0.0.

When l2_penalty_ratio is strictly between 0 and 1, the regularization technique is the elastic net penalty, i.e. a linear combination of the ridge and lasso penalties parametrized by this l2_penalty_ratio.
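Schematically, the three penalties can be gathered in a single objective. In the formula below, \(\alpha\) stands for penalty_level and \(\lambda\) for l2_penalty_ratio. These symbols are introduced here for illustration, and the exact scaling factors used by the underlying scikit-learn estimators are an assumption left unspecified.

```latex
\min_{a_0, a_1, \ldots, a_d}
\sum_{i=1}^{n} \Bigl( y_i - a_0 - \sum_{j=1}^{d} a_j x_{i,j} \Bigr)^2
+ \alpha \Bigl( \lambda \sum_{j=1}^{d} a_j^2 + (1 - \lambda) \sum_{j=1}^{d} |a_j| \Bigr)
```

With \(\lambda=1\) only the ridge term remains, with \(\lambda=0\) only the lasso term remains, and \(\alpha=0\) recovers the unpenalized least-squares problem.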

For example, we can use the ridge penalty with a level of 1.2:

model = create_regression_model("LinearRegressor", training_dataset, penalty_level=1.2)

model.learn()

predicted_output_data_ = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.plot(input_data.ravel(), predicted_output_data_, label="Regression - Ridge(1.2)")

plt.grid()

plt.legend()

plt.show()

We can see that the penalty shrinks the coefficient of the linear model toward zero, which flattens the regression line.
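The shrinkage can be illustrated with the closed-form ridge solution for a single input. The sketch assumes untransformed variables, an unpenalized intercept and the objective \(\sum_i (y_i-a_0-a_1x_i)^2+\alpha a_1^2\), for which \(a_1=S_{xy}/(S_{xx}+\alpha)\). Because GEMSEO applies default transformers, the coefficient it computes may differ from the value obtained here.

```python
from math import sin


def f(x):
    """The reference function."""
    return (6 * x - 2) ** 2 * sin(12 * x - 4)


# Assumed training points: 6 equispaced values in [0, 1].
xs = [i / 5 for i in range(6)]
ys = [f(x) for x in xs]

x_mean = sum(xs) / len(xs)
y_mean = sum(ys) / len(ys)
s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
s_xx = sum((x - x_mean) ** 2 for x in xs)

penalty_level = 1.2  # same level as in the example above

# Without penalty: ordinary least squares.
ols_slope = s_xy / s_xx
# With the ridge penalty: the denominator grows, so the slope shrinks.
ridge_slope = s_xy / (s_xx + penalty_level)

print(ols_slope, ridge_slope)
```

Increasing `penalty_level` makes the denominator larger, so the slope tends to zero and the model tends to the constant prediction `y_mean`.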

Note

In the case of a model with many inputs, we could have used the lasso penalty and seen that some coefficients would have been set to zero.
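The sparsity induced by the lasso penalty can be illustrated in the idealized case of orthonormal inputs. For the objective \(\frac{1}{2}\|y-Xa\|_2^2+\alpha\|a\|_1\), the lasso coefficients are then the soft-thresholded least-squares coefficients. The sketch below uses made-up coefficients and a hypothetical `soft_threshold` helper. It does not reproduce what LinearRegressor does internally.

```python
def soft_threshold(value, threshold):
    """Shrink a value toward zero by threshold, setting small values to zero."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


# Made-up least-squares coefficients of a model with four inputs.
ols_coefficients = [3.0, -0.5, 0.2, -2.0]
penalty_level = 1.0

# Coefficients whose magnitude is below the penalty level vanish.
lasso_coefficients = [soft_threshold(c, penalty_level) for c in ols_coefficients]
print(lasso_coefficients)  # [2.0, 0.0, 0.0, -1.0]
```

The two small coefficients are set exactly to zero, whereas a ridge penalty would only reduce their magnitude.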
