Polynomial regression

A PolynomialRegressor is a polynomial regression model based on a LinearRegressor. This design choice was made because a polynomial regression model is a generalized linear model whose basis functions are monomials. Thus, a PolynomialRegressor benefits from the same settings as LinearRegressor: the offset can be set to zero and regularization techniques can be used.
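To make the "linear model with monomial basis functions" idea concrete, here is a minimal NumPy sketch. It is not GEMSEO's implementation: the helper name `fit_polynomial` and the toy data are assumptions made for illustration only. It builds the matrix of monomials and solves an ordinary least-squares problem for the coefficients.

```python
import numpy as np


def fit_polynomial(x, y, degree):
    """Least-squares fit of y ~ c_0 + c_1*x + ... + c_degree*x**degree.

    Hypothetical helper for illustration, not part of GEMSEO.
    """
    # Each column is one monomial basis function: 1, x, x**2, ..., x**degree.
    basis = np.vander(x, degree + 1, increasing=True)
    # The model is linear in its coefficients, so ordinary least squares applies.
    coefficients, *_ = np.linalg.lstsq(basis, y, rcond=None)
    return coefficients


# Toy data generated from a known quadratic (assumed for this sketch).
x = np.linspace(-1.0, 1.0, 7)
y = 1.0 - 2.0 * x + 3.0 * x**2

coefficients = fit_polynomial(x, y, degree=2)
print(coefficients)  # coefficients ordered from the constant term upwards
```

Because the unknowns enter linearly, any option that makes sense for a linear regression (dropping the constant column, adding a penalty on the coefficients) carries over unchanged to the polynomial case.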

See also

You will find more information about these settings in the example about the linear regression model.

from __future__ import annotations

from matplotlib import pyplot as plt

from numpy import array

from gemseo import create_design_space

from gemseo import create_discipline

from gemseo import sample_disciplines

from gemseo.mlearning import create_regression_model

Problem

In this example, we represent the function \(f(x)=(6x-2)^2\sin(12x-4)\) [FSK08] by the AnalyticDiscipline:

discipline = create_discipline(

"AnalyticDiscipline",

name="f",

expressions={"y": "(6*x-2)**2*sin(12*x-4)"},

)

and seek to approximate it over the input space

input_space = create_design_space()

input_space.add_variable("x", lower_bound=0.0, upper_bound=1.0)

To do this, we create a training dataset with 6 equispaced points:

training_dataset = sample_disciplines(

[discipline], input_space, "y", algo_name="PYDOE_FULLFACT", n_samples=6

)

INFO - 16:21:48: *** Start Sampling execution ***

INFO - 16:21:48: Sampling

INFO - 16:21:48: Disciplines: f

INFO - 16:21:48: MDO formulation: MDF

INFO - 16:21:48: Running the algorithm PYDOE_FULLFACT:

INFO - 16:21:48: 17%|█▋ | 1/6 [00:00<00:00, 688.61 it/sec]

INFO - 16:21:48: 33%|███▎ | 2/6 [00:00<00:00, 1104.05 it/sec]

INFO - 16:21:48: 50%|█████ | 3/6 [00:00<00:00, 1440.52 it/sec]

INFO - 16:21:48: 67%|██████▋ | 4/6 [00:00<00:00, 1711.26 it/sec]

INFO - 16:21:48: 83%|████████▎ | 5/6 [00:00<00:00, 1943.61 it/sec]

INFO - 16:21:48: 100%|██████████| 6/6 [00:00<00:00, 2064.46 it/sec]

INFO - 16:21:48: *** End Sampling execution ***
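For readers who want to inspect the training data without GEMSEO, the following NumPy sketch evaluates the same expression on a grid. It assumes that the six equispaced points include both bounds of the input space, i.e. that they coincide with `np.linspace(0.0, 1.0, 6)`; the names `f`, `x_train` and `y_train` are introduced here for illustration.

```python
import numpy as np


def f(x):
    """The function from the text: (6x - 2)^2 * sin(12x - 4)."""
    return (6.0 * x - 2.0) ** 2 * np.sin(12.0 * x - 4.0)


# Assumption: the 6 equispaced training inputs are 0.0, 0.2, ..., 1.0.
x_train = np.linspace(0.0, 1.0, 6)
y_train = f(x_train)

for x_i, y_i in zip(x_train, y_train):
    print(f"x = {x_i:.1f}  y = {y_i:+.4f}")
```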

Basics

Training

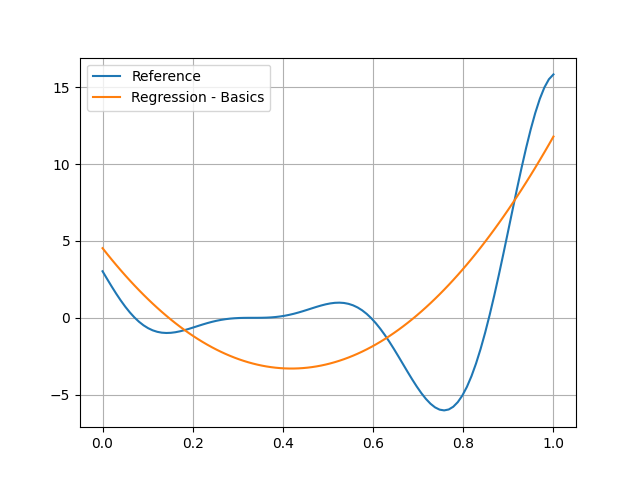

Then, we train a polynomial regression model with a degree of 2 (default) from these samples:

model = create_regression_model("PolynomialRegressor", training_dataset)

model.learn()

Prediction

Once it is built, we can predict the output value of \(f\) at a new input point:

input_value = {"x": array([0.65])}

output_value = model.predict(input_value)

output_value

{'y': array([-0.90980781])}

as well as its Jacobian value:

jacobian_value = model.predict_jacobian(input_value)

jacobian_value

{'y': {'x': array([[20.65451891]])}}
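The prediction and its derivative can be cross-checked with a plain NumPy least-squares fit. This is a sketch under two assumptions, not a description of GEMSEO internals: the training inputs are `np.linspace(0.0, 1.0, 6)`, and the default model behaves like an unregularized quadratic fit. Under these assumptions the printed values should agree closely with the output and Jacobian values reported above.

```python
import numpy as np


def f(x):
    """The function from the text: (6x - 2)^2 * sin(12x - 4)."""
    return (6.0 * x - 2.0) ** 2 * np.sin(12.0 * x - 4.0)


# Assumption: same six equispaced training points as in the example.
x_train = np.linspace(0.0, 1.0, 6)
y_train = f(x_train)

# np.polyfit returns coefficients ordered from highest to lowest degree.
coefficients = np.polyfit(x_train, y_train, deg=2)
# Differentiating the fitted polynomial gives the 1x1 Jacobian dy/dx.
derivative_coefficients = np.polyder(coefficients)

x_new = 0.65
prediction = np.polyval(coefficients, x_new)
slope = np.polyval(derivative_coefficients, x_new)
print(prediction, slope)
```

With a single input and a single output the Jacobian reduces to one number, which is why the derivative of the fitted polynomial is enough here.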

Plotting

Plotting the predictions against reference values on a finer grid shows that the quadratic model fits this function poorly:

test_dataset = sample_disciplines(

[discipline], input_space, "y", algo_name="PYDOE_FULLFACT", n_samples=100

)

input_data = test_dataset.get_view(variable_names=model.input_names).to_numpy()

reference_output_data = test_dataset.get_view(variable_names="y").to_numpy().ravel()

predicted_output_data = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.grid()

plt.legend()

plt.show()

INFO - 16:21:48: *** Start Sampling execution ***

INFO - 16:21:48: Sampling

INFO - 16:21:48: Disciplines: f

INFO - 16:21:48: MDO formulation: MDF

INFO - 16:21:48: Running the algorithm PYDOE_FULLFACT:

INFO - 16:21:48: 1%| | 1/100 [00:00<00:00, 4337.44 it/sec]

INFO - 16:21:48: 100%|██████████| 100/100 [00:00<00:00, 4294.27 it/sec]

INFO - 16:21:48: *** End Sampling execution ***

Settings

The PolynomialRegressor has many options defined in the PolynomialRegressor_Settings Pydantic model. Most of them are presented in the example about the linear regression model. The only one we will look at here is the degree of the polynomial regression model, which can be set with the degree keyword. For example, we can use a cubic regression model instead of a quadratic one:

model = create_regression_model("PolynomialRegressor", training_dataset, degree=3)

model.learn()

and see that this model seems to be better:

predicted_output_data_ = model.predict(input_data).ravel()

plt.plot(input_data.ravel(), reference_output_data, label="Reference")

plt.plot(input_data.ravel(), predicted_output_data, label="Regression - Basics")

plt.plot(input_data.ravel(), predicted_output_data_, label="Regression - Degree(3)")

plt.grid()

plt.legend()

plt.show()
