Morris analysis

from __future__ import annotations

import pprint

from gemseo.algos.parameter_space import ParameterSpace

from gemseo.api import create_discipline

from gemseo.uncertainty.sensitivity.morris.analysis import MorrisAnalysis

from matplotlib import pyplot as plt

from numpy import pi

In this example, we consider a function from \([-\pi,\pi]^3\) to \(\mathbb{R}^2\):

\[(y_1,y_2)=\left(f(x_1,x_2,x_3),f(x_2,x_1,x_3)\right)\]

where \(f(a,b,c)=\sin(a)+7\sin(b)^2+0.1c^4\sin(a)\) is the Ishigami function:

expressions = {
    "y1": "sin(x1)+7*sin(x2)**2+0.1*x3**4*sin(x1)",
    "y2": "sin(x2)+7*sin(x1)**2+0.1*x3**4*sin(x2)",
}

discipline = create_discipline(
    "AnalyticDiscipline", expressions=expressions, name="Ishigami2"
)
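As a quick sanity check of the two expressions, the same function can be written in plain Python. This is a minimal sketch that does not use GEMSEO; the helper names `ishigami` and `ishigami2` are hypothetical and only illustrate that `y2` is `y1` with the roles of `x1` and `x2` swapped.

```python
from __future__ import annotations

from math import pi, sin


def ishigami(a: float, b: float, c: float) -> float:
    """Ishigami function f(a, b, c) = sin(a) + 7 sin(b)^2 + 0.1 c^4 sin(a)."""
    return sin(a) + 7 * sin(b) ** 2 + 0.1 * c**4 * sin(a)


def ishigami2(x1: float, x2: float, x3: float) -> tuple[float, float]:
    """Return (y1, y2), where y2 swaps the roles of x1 and x2 (hypothetical helper)."""
    return ishigami(x1, x2, x3), ishigami(x2, x1, x3)


# Evaluate both outputs at an arbitrary point of the input domain.
y1, y2 = ishigami2(pi / 2, 0.0, 1.0)
print(y1, y2)
```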

Then, we consider the case where the deterministic variables \(x_1\), \(x_2\) and \(x_3\) are replaced with the uncertain variables \(X_1\), \(X_2\) and \(X_3\). The latter are independent and identically distributed according to a uniform distribution between \(-\pi\) and \(\pi\):

space = ParameterSpace()

for variable in ["x1", "x2", "x3"]:
    space.add_random_variable(
        variable, "OTUniformDistribution", minimum=-pi, maximum=pi
    )
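To illustrate what this probabilistic model means, the sketch below draws independent uniform samples with the standard library only. It is not how GEMSEO or OpenTURNS samples the space; the seed and the sample size are arbitrary assumptions.

```python
import random
from math import pi

rng = random.Random(0)  # fixed seed, for reproducibility only (assumption)
names = ("x1", "x2", "x3")

# Draw independent samples of (X1, X2, X3), each uniform on [-pi, pi].
samples = [{name: rng.uniform(-pi, pi) for name in names} for _ in range(1000)]

# Summarize each variable: all values lie in [-pi, pi]
# and the empirical mean is expected to be close to 0.
means = {}
for name in names:
    values = [sample[name] for sample in samples]
    means[name] = sum(values) / len(values)
    print(name, min(values), max(values), means[name])
```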

From that, we would like to carry out a sensitivity analysis with the random outputs \(Y_1=f(X_1,X_2,X_3)\) and \(Y_2=f(X_2,X_1,X_3)\). For that, we can compute the Morris indices from a MorrisAnalysis:

morris = MorrisAnalysis([discipline], space, 10)

morris.compute_indices()

{'mu': {'y2': [{'x1': array([-0.29766709]), 'x2': array([0.26848457]), 'x3': array([-0.7755748])}], 'y1': [{'x1': array([-0.36000398]), 'x2': array([0.77781853]), 'x3': array([-0.70990541])}]}, 'mu_star': {'y2': [{'x1': array([1.33011973]), 'x2': array([0.38907897]), 'x3': array([1.00221431])}], 'y1': [{'x1': array([0.67947346]), 'x2': array([0.88906579]), 'x3': array([0.72694219])}]}, 'sigma': {'y2': [{'x1': array([1.46392293]), 'x2': array([0.39387241]), 'x3': array([1.38465263])}], 'y1': [{'x1': array([0.98724949]), 'x2': array([0.79064599]), 'x3': array([0.8074493])}]}, 'relative_sigma': {'y2': [{'x1': array([1.10059485]), 'x2': array([1.01231995]), 'x3': array([1.38159335])}], 'y1': [{'x1': array([1.45296254]), 'x2': array([0.88929976]), 'x3': array([1.11074761])}]}, 'min': {'y2': [{'x1': array([0.46488117]), 'x2': array([0.02015985]), 'x3': array([3.93670669e-05])}], 'y1': [{'x1': array([0.0338188]), 'x2': array([0.11821721]), 'x3': array([8.72820113e-05])}]}, 'max': {'y2': [{'x1': array([2.14896136]), 'x2': array([0.85930239]), 'x3': array([3.33216248])}], 'y1': [{'x1': array([2.2360336]), 'x2': array([1.83987522]), 'x3': array([2.12052546])}]}}

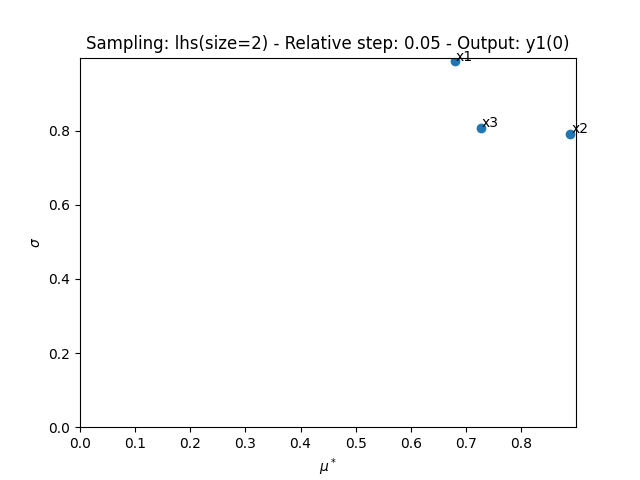

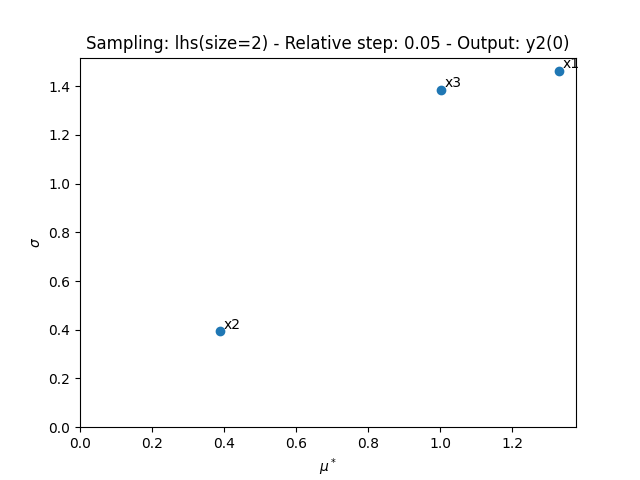

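The resulting indices are statistics of the output variations due to input changes: the empirical mean (mu) and standard deviation (sigma) of these variations, the empirical mean of their absolute values (mu_star), the ratio sigma/mu_star (relative_sigma), and the minimum and maximum of their absolute values (min and max).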

pprint.pprint(morris.indices)

{'max': {'y1': [{'x1': array([2.2360336]),

'x2': array([1.83987522]),

'x3': array([2.12052546])}],

'y2': [{'x1': array([2.14896136]),

'x2': array([0.85930239]),

'x3': array([3.33216248])}]},

'min': {'y1': [{'x1': array([0.0338188]),

'x2': array([0.11821721]),

'x3': array([8.72820113e-05])}],

'y2': [{'x1': array([0.46488117]),

'x2': array([0.02015985]),

'x3': array([3.93670669e-05])}]},

'mu': {'y1': [{'x1': array([-0.36000398]),

'x2': array([0.77781853]),

'x3': array([-0.70990541])}],

'y2': [{'x1': array([-0.29766709]),

'x2': array([0.26848457]),

'x3': array([-0.7755748])}]},

'mu_star': {'y1': [{'x1': array([0.67947346]),

'x2': array([0.88906579]),

'x3': array([0.72694219])}],

'y2': [{'x1': array([1.33011973]),

'x2': array([0.38907897]),

'x3': array([1.00221431])}]},

'relative_sigma': {'y1': [{'x1': array([1.45296254]),

'x2': array([0.88929976]),

'x3': array([1.11074761])}],

'y2': [{'x1': array([1.10059485]),

'x2': array([1.01231995]),

'x3': array([1.38159335])}]},

'sigma': {'y1': [{'x1': array([0.98724949]),

'x2': array([0.79064599]),

'x3': array([0.8074493])}],

'y2': [{'x1': array([1.46392293]),

'x2': array([0.39387241]),

'x3': array([1.38465263])}]}}
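To make these statistics concrete, here is a minimal sketch of a one-at-a-time scheme written with the standard library only. It is a textbook-style illustration, not GEMSEO's implementation: the radial design, the division by the step, the population standard deviation, the number of replicates and the seed are all assumptions, and the helper `elementary_effects` is hypothetical, so the numbers will differ from those printed above.

```python
import random
from math import pi, sin
from statistics import mean, pstdev


def ishigami(a, b, c):
    """Ishigami function, as defined at the top of this example."""
    return sin(a) + 7 * sin(b) ** 2 + 0.1 * c**4 * sin(a)


def elementary_effects(func, lower, upper, n_replicates, step, rng):
    """Return, for each input index, the list of its elementary effects."""
    dimension = len(lower)
    effects = [[] for _ in range(dimension)]
    for _ in range(n_replicates):
        # Random base point, kept far enough from the upper bound
        # for each perturbed point to stay inside the domain.
        base = [
            rng.uniform(lower[i], upper[i] - step * (upper[i] - lower[i]))
            for i in range(dimension)
        ]
        f_base = func(*base)
        for i in range(dimension):
            # Move only the i-th input and record the scaled output variation.
            delta = step * (upper[i] - lower[i])
            moved = list(base)
            moved[i] += delta
            effects[i].append((func(*moved) - f_base) / delta)
    return effects


rng = random.Random(0)  # arbitrary seed
effects = elementary_effects(ishigami, [-pi] * 3, [pi] * 3, 10, 0.05, rng)

indices = {}
for name, values in zip(("x1", "x2", "x3"), effects):
    mu_star = mean(abs(value) for value in values)
    sigma = pstdev(values)
    indices[name] = {
        "mu": mean(values),
        "mu_star": mu_star,
        "sigma": sigma,
        "relative_sigma": sigma / mu_star,
    }
    print(name, indices[name])
```

Because positive and negative variations can cancel out in mu, mu_star is never smaller than the absolute value of mu, which is why it is usually preferred for ranking inputs.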

The main indices correspond to the empirical means of the absolute output variations, stored under mu_star (this main method can be changed with MorrisAnalysis.main_method):

pprint.pprint(morris.main_indices)

{'y1': [{'x1': array([0.67947346]),

'x2': array([0.88906579]),

'x3': array([0.72694219])}],

'y2': [{'x1': array([1.33011973]),

'x2': array([0.38907897]),

'x3': array([1.00221431])}]}

and can be interpreted with respect to the empirical bounds of the outputs:

pprint.pprint(morris.outputs_bounds)

{'y1': [array([-1.42959705]), array([14.89344259])],

'y2': [array([-1.81332358]), array([14.77920445])]}
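One possible way to read the main indices against these bounds is to divide each of them by the width of the corresponding output range. The sketch below does this with the values printed above, copied by hand; this normalization is an illustrative assumption, not necessarily the one GEMSEO applies.

```python
# Main indices (mu_star) and empirical output bounds, copied from the outputs above.
mu_star = {
    "y1": {"x1": 0.67947346, "x2": 0.88906579, "x3": 0.72694219},
    "y2": {"x1": 1.33011973, "x2": 0.38907897, "x3": 1.00221431},
}
bounds = {
    "y1": (-1.42959705, 14.89344259),
    "y2": (-1.81332358, 14.77920445),
}

# Express each index as a fraction of the empirical output range.
relative = {
    output: {
        name: value / (bounds[output][1] - bounds[output][0])
        for name, value in per_input.items()
    }
    for output, per_input in mu_star.items()
}

for output, per_input in relative.items():
    print(output, {name: f"{100 * value:.1f}%" for name, value in per_input.items()})
```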

We can also sort the input parameters by decreasing order of influence and observe that this ranking is not the same for both outputs:

print(morris.sort_parameters("y1"))

print(morris.sort_parameters("y2"))

['x2', 'x3', 'x1']

['x1', 'x3', 'x2']
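These rankings can be reproduced by hand from the main indices printed earlier. The sketch below sorts the input names by decreasing mu_star; the helper `sort_by_influence` is hypothetical and is not claimed to be how `sort_parameters` is implemented.

```python
# Main indices (mu_star), copied from the output of morris.main_indices above.
mu_star = {
    "y1": {"x1": 0.67947346, "x2": 0.88906579, "x3": 0.72694219},
    "y2": {"x1": 1.33011973, "x2": 0.38907897, "x3": 1.00221431},
}


def sort_by_influence(indices):
    """Return the input names sorted by decreasing index value (hypothetical helper)."""
    return sorted(indices, key=indices.get, reverse=True)


ranking_y1 = sort_by_influence(mu_star["y1"])
ranking_y2 = sort_by_influence(mu_star["y2"])
print(ranking_y1)  # ['x2', 'x3', 'x1']
print(ranking_y2)  # ['x1', 'x3', 'x2']
```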

Lastly, we can use the method MorrisAnalysis.plot() to visualize the different series of indices:

morris.plot("y1", save=False, show=False, lower_mu=0, lower_sigma=0)

morris.plot("y2", save=False, show=False, lower_mu=0, lower_sigma=0)

# Workaround for HTML rendering, instead of ``show=True``

plt.show()
